📌 Key Takeaways

Supplier selection determines whether your amplifier launch protects brand equity or becomes a warranty liability exercise.

- Risk Reduction Before Cost Optimization: Quality system maturity, reliability testing capability, and compliance documentation function as hard gates that eliminate suppliers regardless of attractive unit pricing, because warranty exposure and schedule variance typically erase initial BOM savings.

- Four-Gate Governance Prevents Late-Stage Surprises: The Evaluate→Qualify→Pilot→Scale framework with defined acceptance thresholds forces early divergence between capable partners and build-to-print shops, compressing time-to-SOP while maintaining auditable decision criteria.

- Pilots Must Stress the System, Not Validate the Design: A properly sized pilot exercises true production throughput, shift patterns, and production tooling to reveal process capability limits that ten-unit prototype builds by senior technicians will never expose.

- Contracts Preserve Engineering Decisions: Change-control SLAs, test data access protocols, and obsolescence notification periods embedded in supplier agreements prevent informal component swaps and undocumented process changes that introduce field failures after SOP.

- Total Landed Cost Includes Hidden Risk: A 15% lower unit cost means nothing when inadequate tooling buffers force acceptance of marginal tooling, immature change control enables quality escapes, or missing CAPA history predicts warranty rates of 3% instead of 1%.

Documented weighting models and objective exit criteria replace circular debates with systematic accountability.

Product managers, sourcing leaders, and quality teams at audio and consumer electronics brands will find a structured decision framework here, setting the foundation for the detailed gate-by-gate implementation guidance that follows.

The final week before your product launch. Your amplifier supplier just flagged a component obsolescence issue that could delay production by six weeks. The MOQ for the replacement part doesn’t align with your order volume, and there’s no documented process for managing changes. This scenario isn’t rare—it’s predictable when supplier selection treats capability screening as an afterthought rather than a systematic risk-reduction process.

An OEM amplifier supplier is a contract manufacturer that designs, builds, and tests audio amplification products under your brand specifications. These partners handle everything from schematic refinement and PCB layout to enclosure design, regulatory compliance documentation, and final assembly. The relationship extends beyond manufacturing execution—it’s a transfer of design control, quality ownership, and warranty liability.

This framework provides a structured method for evaluating suppliers through four gates with defined acceptance thresholds. The goal is to compress time to start of production (SOP) while protecting brand equity through auditable governance.

What an OEM Amplifier Supplier Actually Is (and Isn’t)

The terms get used interchangeably, but precision matters when assigning accountability. An OEM amplifier supplier typically operates as either a contract manufacturer executing your finalized design, or an ODM partner contributing substantive design work under your specifications and brand. Some suppliers offer both models depending on project scope.

Core capabilities include schematic design, thermal analysis, PCB layout, enclosure mechanical design, and component sourcing. Quality functions span incoming inspection, in-process quality control, final QC, and reliability testing. Regulatory expertise covers safety listings (UL, ETL), CE marking, EMC compliance, and RoHS documentation.

The supplier becomes an extension of your engineering and operations functions. They hold proprietary design data, maintain your bill of materials, and own traceability records that determine your ability to manage field failures or regulatory inquiries. This isn’t a transactional vendor relationship—it’s operational integration with shared liability.

Understanding this distinction clarifies why selection criteria must prioritize governance maturity over cost minimization. The cheapest qualified supplier reduces initial outlay. The most capable supplier reduces total program risk, which includes warranty exposure, schedule variance, and brand damage from quality escapes.

The Risk-Minimized Selection Framework (Overview)

Supplier selection functions as a tollgate system with four sequential stages: Evaluate, Qualify, Pilot, and Scale. Each gate has defined inputs, acceptance criteria, and required artifacts before progression. This structure prevents the common failure mode where friendly pilot results mask systemic issues that only surface at volume production.

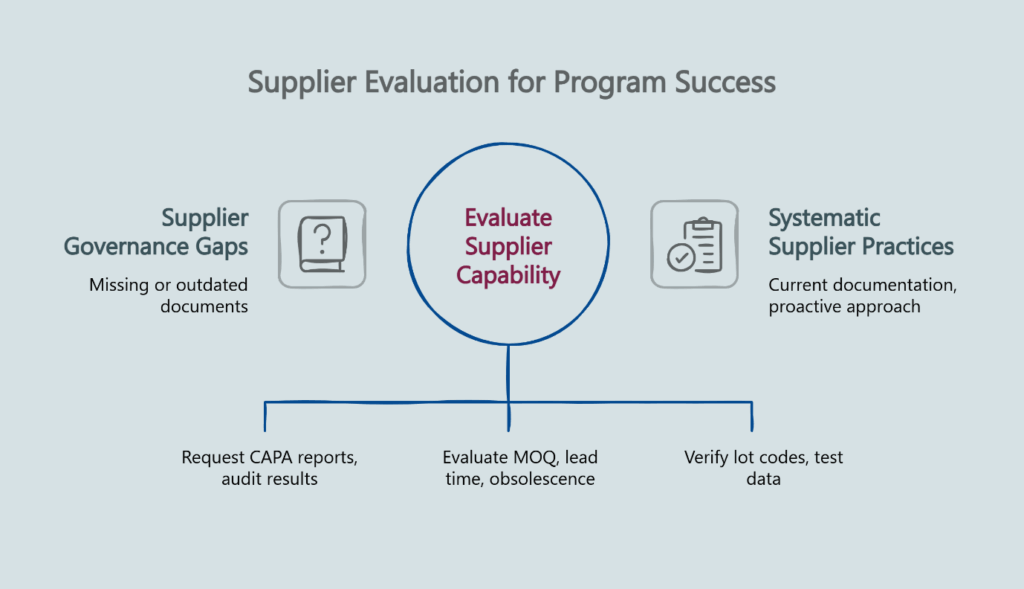

Gate 1 – Evaluate screens for fundamental capability and operational fit. You’re verifying that documented quality management systems exist, not just claimed capabilities. This gate eliminates suppliers who cannot demonstrate CAPA history, traceability protocols, or governance over incoming material quality.

Gate 2 – Qualify validates technical depth and compliance pathways. DFM review quality, thermal margin calculations, and reliability test plans get documented before tooling commitments. The acceptance threshold here is agreement on measurable performance targets, not vague assurances of “industry-standard practices.”

Gate 3 – Pilot tests whether stated capabilities predict mass-production reality. Pilot runs must be sized to exercise true manufacturing throughput, not just prove the design works in ideal conditions. Objective exit criteria—yield rates, test data distributions, stress test results—replace subjective assessments.

Gate 4 – Scale confirms readiness for SOP. Dependency mapping identifies risks to timeline. Tooling buffers, golden sample criteria, and data access protocols get embedded in the production plan and contract terms. This gate exists because many launches fail not from technical issues but from execution coordination gaps.

The framework’s value lies in making implicit assumptions explicit. When you define acceptance thresholds upfront, circular debates about “good enough” quality or “reasonable” lead times get replaced with objective assessments against agreed criteria.
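As a rough illustration of the tollgate idea, the sketch below models each gate as a list of required artifacts and lets a supplier advance only while every artifact for the current gate is on file. The artifact names and helper functions are hypothetical, chosen for this example; a real program would define its own lists.

```python
from typing import Dict, List, Optional, Set

# Hypothetical artifact lists per gate, in gate order.
GATES: Dict[str, List[str]] = {
    "Evaluate": ["capa_reports", "traceability_demo", "incoming_inspection_procedure"],
    "Qualify": ["dfm_review", "thermal_margin_calcs", "reliability_test_plan"],
    "Pilot": ["yield_report", "stress_test_results", "pilot_signoff"],
    "Scale": ["dependency_map", "golden_sample_criteria", "change_control_procedure"],
}


def missing_artifacts(gate: str, evidence: Set[str]) -> List[str]:
    """Artifacts required by a gate that are not yet in the evidence set."""
    return [a for a in GATES[gate] if a not in evidence]


def highest_gate_passed(evidence: Set[str]) -> Optional[str]:
    """Walk the gates in order and stop at the first one with a gap."""
    passed = None
    for gate in GATES:
        if missing_artifacts(gate, evidence):
            break
        passed = gate
    return passed


if __name__ == "__main__":
    on_file = {"capa_reports", "traceability_demo",
               "incoming_inspection_procedure", "dfm_review"}
    print(highest_gate_passed(on_file))
    print(missing_artifacts("Qualify", on_file))
```

Because the gates are checked sequentially, a complete Pilot package cannot compensate for a gap at Evaluate, which mirrors the intent of the framework.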

Gate 1 — Evaluate: Screening for Capability, Fit, and Governance

Initial screening focuses on three categories: quality system maturity, operational fit with your program parameters, and governance over supply chain inputs.

Quality management system verification requires documented evidence, not certification badges. Request the last twelve months of CAPA (corrective and preventive action) reports. The pattern matters more than the count: mature suppliers show decreasing recurrence of root causes over time, indicating that corrective actions actually corrected. Absence of CAPAs signals either perfect execution (unlikely) or inadequate failure detection (probable).
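One way to look at pattern rather than count is to group CAPA records by root cause and compare the first half of the review window with the second. The sketch below assumes a simple record format of (date opened, root-cause category); the categories and the midpoint rule are illustrative assumptions, not a standard method.

```python
from collections import Counter
from datetime import date
from typing import List, Tuple

CapaRecord = Tuple[date, str]  # (date opened, root-cause category), hypothetical format


def recurrence_by_half(records: List[CapaRecord], midpoint: date):
    """Count root causes opened before and on/after the midpoint."""
    first = Counter(cause for opened, cause in records if opened < midpoint)
    second = Counter(cause for opened, cause in records if opened >= midpoint)
    return first, second


def recurring_causes(records: List[CapaRecord], midpoint: date) -> List[str]:
    """Root causes seen in the first half that did not decline in the second half."""
    first, second = recurrence_by_half(records, midpoint)
    return sorted(c for c in first if second[c] >= first[c])


if __name__ == "__main__":
    log = [
        (date(2024, 1, 10), "solder_profile"),
        (date(2024, 2, 5), "solder_profile"),
        (date(2024, 3, 1), "operator_training"),
        (date(2024, 9, 1), "operator_training"),
        (date(2024, 10, 2), "operator_training"),
    ]
    print(recurring_causes(log, date(2024, 7, 1)))
```

A root cause that keeps reappearing after its CAPA was closed is the signal worth raising with the supplier; a cause that disappears suggests the corrective action held.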

ISO 9001 certification serves as a useful baseline indicator of process control and continual improvement discipline, though certification alone doesn’t guarantee outcomes. The real test is whether the supplier can produce anonymized CAPA close-out reports and layered process audit results that demonstrate systematic practice rather than recently assembled responses to your inquiry.

Traceability capability determines your ability to execute targeted recalls or investigate field failures without scrapping entire production lots. Verify that lot codes link to incoming material certifications, process travelers capture in-line test data by serial number, and final QC records connect to specific operator shifts and equipment calibration dates. This granularity protects you when a single component batch proves defective.
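The linkage described above can be pictured as one record per serial number that points back to the component lots consumed. This is a minimal sketch with hypothetical field names and part numbers; a real supplier system (MES or ERP) will structure this differently.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class UnitRecord:
    serial: str
    production_lot: str
    shift: str
    component_lots: Dict[str, str]  # part number -> supplier lot code
    test_results: Dict[str, float] = field(default_factory=dict)


def affected_serials(units: List[UnitRecord], part_number: str, bad_lot: str) -> List[str]:
    """Serial numbers built with a specific component lot."""
    return [u.serial for u in units if u.component_lots.get(part_number) == bad_lot]


if __name__ == "__main__":
    units = [
        UnitRecord("SN001", "P-24A", "day", {"CAP-470UF": "LOT-17"}),
        UnitRecord("SN002", "P-24A", "night", {"CAP-470UF": "LOT-18"}),
        UnitRecord("SN003", "P-24B", "day", {"CAP-470UF": "LOT-17"}),
    ]
    print(affected_serials(units, "CAP-470UF", "LOT-17"))
```

If the supplier can answer this kind of query in minutes during an audit, a defective capacitor batch becomes a targeted recall of specific serials rather than a scrap decision on whole production lots.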

Operational fit assessment evaluates whether the supplier’s standard practices align with your program constraints. MOQ requirements must be compatible with your volume forecast and inventory carrying costs. Lead time expectations need to accommodate your launch timeline without forcing premium expedite fees that erase cost savings.

Obsolescence management processes reveal whether the supplier monitors component lifecycle status proactively or reacts after parts go end-of-life. Request their standard notification period for obsolescence and their documented process for qualifying alternate components. Reactive suppliers force you into rushed redesigns; proactive partners give you lead time to plan transitions.

Ask how they handle incoming inspection failures. Suppliers with mature practices have documented authority to reject non-conforming material and alternate sourcing protocols that prevent schedule delays while maintaining quality standards. Those without clear authority end up using marginal components because halting the line seems worse than accepting risk.

The acceptance threshold for Gate 1 is simple: all documentation exists, is current, and demonstrates systematic practice rather than recently assembled responses to your inquiry. Missing or outdated documents indicate governance gaps that predict downstream surprises.

Gate 2 — Qualify: DFM Depth, Reliability Plan, and Compliance Path

Technical qualification validates that claimed capabilities translate into rigorous engineering practices. This gate prevents the scenario where a supplier can build your amplifier but cannot explain why their design choices ensure reliable field performance.

DFM review depth reveals engineering maturity. Basic DFM feedback addresses manufacturability—component placement for automated assembly, thermal relief on power traces, fiducial placement for optical inspection. Advanced DFM addresses reliability—derating calculations for power components, thermal margin analysis under worst-case ambient conditions, mechanical stress analysis for connectors subject to field handling.

Request specific thermal margin data: junction temperatures under continuous maximum power at specified ambient, with documented assumptions about airflow, enclosure thermal resistance, and altitude. These calculations should reference component datasheets and use conservative assumptions. Vague assurances about “adequate cooling” or “industry-standard practices” signal inadequate analysis.
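For orientation, the simplest form of such a calculation is the steady-state thermal resistance model, where junction temperature equals ambient plus dissipated power times the sum of the junction-to-case, case-to-sink, and sink-to-ambient resistances. The sketch below uses that model with hypothetical numbers; it does not account for altitude derating, airflow changes, or transient behavior, which a real analysis would need to document.

```python
def junction_temp_c(ambient_c: float, power_w: float,
                    theta_jc: float, theta_cs: float, theta_sa: float) -> float:
    """Steady-state junction temperature in degrees C.

    theta_* are thermal resistances in degrees C per watt:
    junction-to-case, case-to-sink, sink-to-ambient.
    """
    return ambient_c + power_w * (theta_jc + theta_cs + theta_sa)


def thermal_margin_c(tj_max_c: float, tj_c: float) -> float:
    """Headroom between the datasheet maximum and the estimated junction temperature."""
    return tj_max_c - tj_c


if __name__ == "__main__":
    # Hypothetical output device: 12 W dissipated at 50 C worst-case ambient.
    tj = junction_temp_c(ambient_c=50.0, power_w=12.0,
                         theta_jc=1.0, theta_cs=0.5, theta_sa=3.0)
    print(tj, thermal_margin_c(150.0, tj))
```

With these assumed values the estimate is 104 °C at the junction, leaving 46 °C of margin against a 150 °C rating. A supplier's submission should show the same chain of inputs so each assumption can be challenged.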

Reliability testing plans should specify protocols such as HALT and HASS (Highly Accelerated Life Testing and Highly Accelerated Stress Screening). HALT identifies design margins by stressing the product to failure. HASS screens production units to catch manufacturing defects before shipping. Many suppliers claim to perform these tests; few document specific stress profiles, acceptance criteria, and sample sizes upfront.

Ask for their standard reliability test sequence: thermal cycling parameters, vibration profiles, power cycling stress, and combined environment testing. The presence of documented test plans matters more than the sophistication of the protocols—it indicates systematic practice versus ad-hoc testing when problems emerge.

Compliance pathway documentation should map regulatory requirements to specific design elements and test procedures. For U.S. distribution, safety listing through an OSHA-recognized NRTL (Nationally Recognized Testing Laboratory) such as UL or ETL becomes essential. The listing evaluation covers isolation test voltages, leakage current limits, temperature rise limits, and flame rating requirements for enclosures. The certification isn’t just a mark on the product; it represents a complete evidence package maintained under ongoing surveillance and follow-up services.

For CE marking, compliance documentation covers EMC test plans, essential health and safety requirements, and technical file contents. Request a sample compliance document package from a previous program. Mature suppliers maintain templates and can produce complete technical files efficiently. Less experienced partners underestimate documentation effort, causing delays when certification bodies request missing data.

The acceptance threshold for Gate 2: documented agreement on performance targets and test thresholds before any tooling expenditure. Handshake agreements about “working together to resolve issues” provide no protection when disputed test results delay launch.

Gate 3 — Pilot: Designing Runs that Predict Mass-Production Reality

Pilot runs expose process capability and identify issues that only manifest at production tempo. Undersized pilots provide false confidence; properly designed pilots stress the manufacturing system to reveal hidden dependencies and capability limits.

Pilot sizing must exercise true throughput rates, not just validate design functionality. A ten-unit pilot built over two weeks by senior technicians proves nothing about hundred-unit-per-week production by shift operators. Size the pilot to require multiple production days, multiple shifts if volume production will span shifts, and use of production tooling rather than prototype fixtures.

Define yield targets explicitly. “Acceptable” quality is not a threshold. Specify first-pass yield expectations—percentage of units completing final test without rework—and rework allowances. Track defect categories: component placement errors, solder defects, mechanical assembly issues, test failures. The distribution of failure modes indicates whether issues stem from process control problems (fixable) or design marginality (concerning).
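First-pass yield and the defect distribution are straightforward to compute once each pilot unit is logged with either a clean pass or a defect category. The sketch below assumes one entry per unit, with `None` meaning the unit completed final test without rework; the category labels are illustrative.

```python
from collections import Counter
from typing import List, Optional, Tuple


def first_pass_yield(results: List[Optional[str]]) -> float:
    """Fraction of units that passed final test without rework."""
    if not results:
        raise ValueError("no units recorded")
    passed = sum(1 for r in results if r is None)
    return passed / len(results)


def defect_pareto(results: List[Optional[str]]) -> List[Tuple[str, int]]:
    """Defect categories ordered from most to least frequent."""
    return Counter(r for r in results if r is not None).most_common()


if __name__ == "__main__":
    pilot = [None] * 94 + ["solder"] * 3 + ["placement"] * 2 + ["test_failure"]
    print(first_pass_yield(pilot))
    print(defect_pareto(pilot))
```

In this made-up 100-unit pilot the yield is 94%, and the ordering of categories shows where to look first: a distribution dominated by solder and placement defects points toward process control, while one dominated by test failures may point toward design margin.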

Stress testing during pilots should exceed normal production test sequences. Subject pilot units to extended burn-in at elevated temperature, power cycling beyond datasheet limits, and mechanical shock representative of shipping and field handling. Units that pass production tests but fail stress tests indicate insufficient margin in the design or inadequate production test coverage.

Temperature cycling helps identify latent solder joint issues, especially on high-thermal-mass components like transformers and heat sinks. Vibration testing reveals mechanical assembly issues—loose screws, inadequate connector retention, resonance problems with heavy components. These tests cost more than basic functional testing but prevent the scenario where your first DOA returns trace to failures that stress testing would have caught.

Objective exit criteria replace subjective quality assessments. Define minimum first-pass yield, maximum rework rate by defect category, stress test pass rates, and required test data distributions. For example: “95% first-pass yield, less than 3% rework for placement errors, 100% pass rate on 48-hour burn-in at 50°C, thermal measurements within 10°C of simulation predictions.”
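The example criteria quoted above translate directly into a pass/fail check. The sketch below hard-codes those four thresholds for illustration; the `PilotResults` structure and its field names are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PilotResults:
    first_pass_yield: float       # fraction, 0..1
    placement_rework_rate: float  # fraction, 0..1
    burn_in_pass_rate: float      # fraction, 0..1 (48-hour burn-in at 50 C)
    max_thermal_delta_c: float    # largest |measured - simulated| in degrees C


def exit_criteria_failures(r: PilotResults) -> List[str]:
    """Return the list of unmet criteria; an empty list means the pilot may exit."""
    failures = []
    if r.first_pass_yield < 0.95:
        failures.append("first-pass yield below 95%")
    if r.placement_rework_rate >= 0.03:
        failures.append("placement rework not under 3%")
    if r.burn_in_pass_rate < 1.0:
        failures.append("burn-in pass rate below 100%")
    if r.max_thermal_delta_c > 10.0:
        failures.append("thermal measurements more than 10 C from simulation")
    return failures


if __name__ == "__main__":
    print(exit_criteria_failures(PilotResults(0.96, 0.02, 1.0, 8.0)))
    print(exit_criteria_failures(PilotResults(0.93, 0.03, 0.98, 12.0)))
```

Returning the list of unmet criteria, rather than a single boolean, gives the gate review a concrete set of deviations to document and close.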

PPAP-like sign-off processes formalize acceptance. While PPAP (Production Part Approval Process) originates from automotive manufacturing, its principles apply effectively to audio programs: production samples, dimensional validation reports, material certifications, and process capability data are documented and approved before volume commitments. This discipline prevents the drift where pilot results look adequate but were achieved through extraordinary intervention that won’t scale.

Golden sample retention provides reference units for production comparison. Keep pilot units that represent desired quality, properly labeled with test data and acceptance criteria. When production quality questions arise, golden samples provide objective comparison points.

The acceptance threshold for Gate 3: objective exit criteria met, all deviations documented with corrective actions implemented and verified, and formal sign-off by both engineering teams before scaling.

Gate 4 — Scale: SOP Readiness, Timelines that Hold, and Change Control

Transition to volume production often fails not from technical issues but from coordination gaps. Gate 4 validates that execution plans address dependencies, buffers, and change control explicitly.

Dependency mapping identifies sequential gates and parallel paths on the critical timeline. Component long-lead items, tooling delivery, operator training completion, test equipment calibration, and regulatory certification all feed SOP readiness. Map which activities have slack and which are on the critical path. This clarity enables priority decisions when delays occur.
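A dependency map of this kind can be checked with a basic critical-path calculation. The sketch below assumes each task has a duration and a list of predecessors, with no circular dependencies; the task names, durations, and dependency links are hypothetical.

```python
from typing import Dict, List, Tuple

# task -> (duration in weeks, predecessors); all values are hypothetical
Tasks = Dict[str, Tuple[int, List[str]]]

TASKS: Tasks = {
    "long_lead_components": (10, []),
    "tooling_delivery": (8, []),
    "operator_training": (2, ["tooling_delivery"]),
    "test_equipment_calibration": (1, ["tooling_delivery"]),
    "regulatory_certification": (6, ["tooling_delivery"]),
    "sop": (0, ["long_lead_components", "operator_training",
                "test_equipment_calibration", "regulatory_certification"]),
}


def earliest_finish(tasks: Tasks) -> Dict[str, int]:
    """Earliest finish week of every task (assumes the graph has no cycles)."""
    finish: Dict[str, int] = {}

    def visit(name: str) -> int:
        if name not in finish:
            duration, preds = tasks[name]
            finish[name] = duration + max((visit(p) for p in preds), default=0)
        return finish[name]

    for name in tasks:
        visit(name)
    return finish


def critical_path(tasks: Tasks, end: str) -> List[str]:
    """Follow the latest-finishing predecessor back from the end task."""
    finish = earliest_finish(tasks)
    path = [end]
    while tasks[path[-1]][1]:
        path.append(max(tasks[path[-1]][1], key=lambda p: finish[p]))
    return list(reversed(path))


def slack_before(tasks: Tasks, end: str) -> Dict[str, int]:
    """Weeks of slack for each direct predecessor of the end task."""
    finish = earliest_finish(tasks)
    preds = tasks[end][1]
    latest = max(finish[p] for p in preds)
    return {p: latest - finish[p] for p in preds}


if __name__ == "__main__":
    print(critical_path(TASKS, "sop"))
    print(slack_before(TASKS, "sop"))
```

In this invented schedule, tooling delivery followed by regulatory certification sets the SOP date at week 14, while long-lead components and operator training each carry four weeks of slack. That is the kind of clarity that supports priority decisions when something slips.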

Timeline governance benefits from what many manufacturing programs call a “control tower” approach: weekly cross-functional reviews with defined escalation triggers. When a critical path item slips, a clear recovery-planning protocol should take over, rather than ad hoc discussions about “working harder to catch up.” Documented plans with owners, dates, and contingencies replace optimism with accountability.

Tooling buffers account for iteration cycles. First-article tooling rarely produces acceptable parts immediately. Budget time for tooling adjustments based on dimensional validation results. Underbuffered schedules force acceptance of marginal tooling because correcting problems would miss launch dates.

Golden sample criteria for production units should match pilot acceptance thresholds. The first production lot receives the same scrutiny as pilots: full dimensional inspection, extended burn-in, and stress testing for sample units. Only after demonstrating that production quality matches pilot quality do you transition to statistical sampling plans.

Data access protocols get defined contractually. Specify formats for test data delivery, frequency of quality metrics reporting, and your right to audit test records or inspect production in-process. Suppliers resist documentation overhead, but vague agreements about providing data “as needed” create friction when disputes arise about whether quality trends warrant investigation.

Change control procedures should define approval requirements, notification periods, and validation steps for any modification—component substitutions, process changes, tooling adjustments, test procedure updates. Undocumented changes cause the most common SOP failures: a “minor” component swap introduces noise, a process optimization reduces test coverage, a tooling adjustment affects fit.

The acceptance threshold for Gate 4: SOP readiness checklist complete, all production prerequisites met with verification evidence, and formal approval to begin volume shipments. Production starts when the system is ready, not when the calendar demands it.

Decision Weighting Model: Ending Circular Debates

Qualitative assessments produce circular arguments. Quantitative weighting models force explicit trade-off decisions. Assign weights to selection criteria based on your program’s priorities, evaluate each supplier against those criteria, and calculate total scores.

Example weighting for a mid-market audio brand launching a new commercial amplifier series:

- QMS maturity and CAPA history: 35%

- Reliability data and test plan depth: 25%

- Compliance documentation capability: 15%

- Pilot performance against exit criteria: 15%

- Commercial terms (MOQ, lead time, payment): 10%

This model explicitly states that quality system maturity carries more than three times the weight of commercial terms. When a low-cost supplier with immature quality processes emerges, the scoring model prevents rationalization. Cost advantages matter, but not enough to overcome governance gaps.
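The arithmetic behind the model is a weighted sum. The sketch below uses the example weights listed above; the 0 to 10 scoring scale and the two supplier scorecards are hypothetical.

```python
from typing import Dict

# Weights from the example above; they must sum to 1.
WEIGHTS: Dict[str, float] = {
    "qms": 0.35,
    "reliability": 0.25,
    "compliance": 0.15,
    "pilot": 0.15,
    "commercial": 0.10,
}


def weighted_score(scores: Dict[str, float]) -> float:
    """Weighted total for one supplier; scores are on an assumed 0-10 scale."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted criteria")
    if abs(sum(WEIGHTS.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(WEIGHTS[k] * scores[k] for k in WEIGHTS)


if __name__ == "__main__":
    supplier_a = {"qms": 9, "reliability": 8, "compliance": 8, "pilot": 8, "commercial": 5}
    supplier_b = {"qms": 4, "reliability": 5, "compliance": 6, "pilot": 7, "commercial": 10}
    print(round(weighted_score(supplier_a), 2), round(weighted_score(supplier_b), 2))
```

Here the hypothetical Supplier B earns a perfect commercial score yet totals about 5.6 against roughly 8.05 for Supplier A, because the commercial criterion can contribute at most one point out of ten.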

Total landed cost calculations must include NRE (non-recurring engineering), tooling amortization over projected volume, and estimated warranty exposure based on historical field failure rates. A supplier quoting 15% lower unit cost but requiring $50,000 more in tooling and lacking documented reliability processes may cost more across a 10,000-unit program when warranty returns reach 3% instead of 1%.
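Whether the cheaper quote actually costs more depends on inputs the paragraph does not specify, chiefly the baseline unit price and the cost of handling each warranty return. The sketch below makes those assumptions explicit: a $40 baseline unit cost, $80,000 baseline tooling, and $120 per return are all hypothetical figures chosen for illustration, while the 15% discount, $50,000 tooling difference, 10,000-unit volume, and 1% versus 3% return rates come from the text.

```python
from dataclasses import dataclass


@dataclass
class Quote:
    unit_cost: float
    nre: float
    tooling: float
    warranty_rate: float  # expected fraction of shipped units returned


def total_landed_cost(q: Quote, volume: int, cost_per_return: float) -> float:
    """Unit spend + NRE + tooling + expected warranty cost over the program."""
    return (q.unit_cost * volume + q.nre + q.tooling
            + q.warranty_rate * volume * cost_per_return)


def breakeven_cost_per_return(base: Quote, cheap: Quote, volume: int) -> float:
    """Cost per return above which the cheaper quote becomes the costlier program."""
    upfront_saving = ((base.unit_cost - cheap.unit_cost) * volume
                      - (cheap.nre - base.nre)
                      - (cheap.tooling - base.tooling))
    extra_returns = (cheap.warranty_rate - base.warranty_rate) * volume
    if extra_returns <= 0:
        raise ValueError("cheaper quote has no additional expected returns")
    return upfront_saving / extra_returns


if __name__ == "__main__":
    # Hypothetical baseline: $40 unit cost, $80,000 tooling, 1% returns.
    mature = Quote(unit_cost=40.0, nre=0.0, tooling=80_000.0, warranty_rate=0.01)
    # 15% lower unit cost, $50,000 more tooling, 3% returns.
    cheaper = Quote(unit_cost=34.0, nre=0.0, tooling=130_000.0, warranty_rate=0.03)
    for q in (mature, cheaper):
        print(round(total_landed_cost(q, 10_000, cost_per_return=120.0)))
    print(round(breakeven_cost_per_return(mature, cheaper, 10_000), 2))
```

Under these assumptions the nominally cheaper quote comes out about $14,000 more expensive over the program, and the break-even sits near $50 per return. With a much higher unit price the 15% discount can still win on paper, which is why the assumptions belong in the documented model rather than in someone's head.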

Document the weighting model and scoring rationale. When stakeholders question supplier selection months later—during the inevitable moment when a decision gets second-guessed—the documented model provides objective justification. “We selected Supplier A because our agreed criteria weighted quality maturity at 35% and they scored highest on that dimension” ends debates more cleanly than “we had a good feeling about them.”

What to Put in the Contract to Preserve the Plan

Supplier contracts should embed the governance protocols developed during Gates 1-4. Procurement teams focus on pricing, payment terms, and liability limits. Engineering-critical clauses often get negotiated weakly or omitted entirely.

Change control SLAs define maximum approval cycles for routine changes (component substitutions within approved manufacturers list) and substantial changes (design modifications, test procedure changes). Standard language like “reasonable approval timeframe” provides no protection when the supplier seeks approval for a component swap two weeks before your launch and claims a four-week review is unreasonable.

Test data access provisions should specify data formats, delivery frequency, and retention periods. Require test data delivery within 48 hours of lot completion, not upon request. Specify that you retain rights to audit test records on-site with reasonable notice. These clauses matter when quality investigations require analyzing test distributions across multiple lots.
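A delivery clause like this is easy to monitor once lot completion and data delivery timestamps are logged. The sketch below flags lots whose test data arrived, or is still outstanding, beyond the 48-hour window; the log format and lot identifiers are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Optional, Tuple

SLA = timedelta(hours=48)  # delivery window named in the text

# lot id -> (lot completion time, data delivery time or None if not yet delivered)
LotLog = Dict[str, Tuple[datetime, Optional[datetime]]]


def late_lots(log: LotLog, now: datetime, sla: timedelta = SLA) -> List[str]:
    """Lot ids whose test data was delivered late or is overdue as of `now`."""
    late = []
    for lot_id, (completed, delivered) in log.items():
        reference = delivered if delivered is not None else now
        if reference - completed > sla:
            late.append(lot_id)
    return late


if __name__ == "__main__":
    log = {
        "L1": (datetime(2025, 3, 1, 8), datetime(2025, 3, 2, 9)),
        "L2": (datetime(2025, 3, 1, 8), datetime(2025, 3, 4, 8)),
        "L3": (datetime(2025, 3, 3, 8), None),
        "L4": (datetime(2025, 3, 1, 8), None),
    }
    print(late_lots(log, now=datetime(2025, 3, 4, 12)))
```

Treating undelivered data as overdue once the window has elapsed keeps the check meaningful for lots where the supplier has simply gone quiet.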

When a warranty spike triggers an 8D investigation (the structured eight-discipline problem-solving methodology), documented data access rights enable rapid root cause analysis. Because data rights and turnaround times were specified upfront, the supplier provides logs and failure analysis results within the agreed SLA, allowing targeted corrective action rather than broad, brand-visible disruptions.

Obsolescence notification periods should mandate written notice at least 180 days before last-time-buy dates for any program component. This lead time enables qualification of alternates without forcing expedited redesign cycles. Include provisions requiring the supplier to propose alternate components and to fund any requalification testing that their choice of alternate necessitates.
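The 180-day rule reduces to simple date arithmetic, which makes it easy to verify each notice as it arrives. The function names and dates below are illustrative only.

```python
from datetime import date, timedelta

MIN_NOTICE = timedelta(days=180)  # notice period from the clause above


def latest_compliant_notice(last_time_buy: date,
                            min_notice: timedelta = MIN_NOTICE) -> date:
    """Last calendar date on which written notice still meets the clause."""
    return last_time_buy - min_notice


def notice_is_compliant(notice_date: date, last_time_buy: date,
                        min_notice: timedelta = MIN_NOTICE) -> bool:
    """True if the notice was given at least `min_notice` before last-time-buy."""
    return last_time_buy - notice_date >= min_notice


if __name__ == "__main__":
    ltb = date(2025, 12, 31)
    print(latest_compliant_notice(ltb))
    print(notice_is_compliant(date(2025, 8, 15), ltb))
```

For a last-time-buy date of 31 December 2025, notice would need to arrive by 4 July 2025; a notice dated 15 August would fall short of the clause.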

Golden sample governance clauses define custody, re-baseline rules when changes occur, and dispute resolution processes when production units deviate from golden sample specifications.

Intellectual property clauses should clearly delineate ownership of design data, test procedures, and tooling. For ODM relationships where the supplier contributes design work, specify whether you own the complete design or only rights to use it. This distinction matters if you later want to transfer production to another supplier.

Warranty provisions should define failure analysis responsibilities, including who performs root cause investigation and who funds corrective action. Open-ended warranty exposure without clear failure analysis protocols creates disputes about whether field failures stem from design issues, manufacturing defects, or application conditions.

Implementation Checklist & Common Pitfalls

Before RFQ:

- Define acceptance thresholds for each gate

- Develop decision weighting model with stakeholder agreement

- Specify required documentation for quality system evaluation

- Create pilot exit criteria template

During Evaluation:

- Request actual CAPA logs, not quality manual excerpts

- Verify traceability by running a mock trace from a finished-unit lot code back to incoming material records

- Review compliance doc packages from completed programs

- Tour the facility during normal production hours, not during prepared demonstrations

Common Pitfalls:

- Undersizing pilots to save cost, then discovering process issues at volume

- Accepting vague thermal analysis (“adequate margins”) without specific calculations

- Starting tooling before DFM review closure and agreement on specifications

- Treating change control as bureaucratic overhead rather than risk management

- Evaluating suppliers only on unit cost, ignoring NRE, tooling, and warranty exposure

- Treating NRTL marks as marketing logos rather than evidence packages maintained under ongoing surveillance

Using the Selection Map in Practice

The one-page Supplier Selection Map serves as a reference tool for vendor meetings and internal alignment. Before vendor calls, circulate the map so stakeholders understand gate artifacts and exit rules. This shared framework prevents the common pattern where different functional groups apply inconsistent evaluation criteria.

During review meetings, record evidence against each gate systematically. If an artifact is missing—say, thermal margin calculations or CAPA close-out reports—log an action with a due date rather than waiving the gate. The framework’s discipline lies in treating missing evidence as a stop condition, not a negotiation point.

After decisions, archive the scoring matrix and gate evidence as part of the program file. Link this documentation in the business case to maintain organizational memory. When similar decisions arise two years later, teams can reference what criteria mattered and why certain suppliers were selected or rejected.

Frequently Asked Questions

What makes an OEM amplifier supplier “qualified”?

Mature QMS with documented CAPA history, lot-level traceability, documented test plans with specific acceptance criteria, and proven pilot-to-ramp stability demonstrated through previous programs.

Which criteria carry the most weight in supplier selection?

QMS maturity and reliability test capability function as hard gates—suppliers without these fundamentals should be eliminated regardless of cost advantages. Commercial terms matter after capability screening.

How big should a pilot run be to predict mass-production reality?

Sufficient to exercise true production throughput rates and multiple shifts if volume production will span shifts. A ten-unit prototype build proves nothing about hundred-unit-per-week manufacturing capability.

How do we prevent timeline slip between RFQ and SOP?

Gate reviews with documented acceptance criteria, dependency mapping that identifies critical path items, tooling iteration buffers, and contractual change control SLAs with defined approval cycles.

What belongs in the contract to reduce warranty risk?

Change control SLAs, test data access rights with specified delivery frequency and formats, obsolescence notification periods, and clear failure analysis responsibility allocation with 8D turnaround commitments.

Where do BOM savings backfire?

When the cheapest qualified supplier lacks mature change control, leading to undocumented component swaps that introduce quality issues. Or when inadequate tooling buffers force acceptance of marginal tooling to hit schedule, creating yield problems at volume.

Next Steps

Implementing this framework requires translating principles into program-specific checklists and acceptance thresholds. Start by documenting your decision weighting model with stakeholder agreement before beginning supplier evaluation.

Ready to discuss your next amplifier program? Request a DFM audit or explore our amplifier manufacturing services for detailed capability information.

Disclaimer: This article provides general information about supplier selection frameworks for educational purposes. It does not constitute professional advice for your specific situation. Supplier selection involves complex technical, commercial, and legal considerations that vary by program requirements. Consult qualified professionals for guidance on your particular needs.

Our Editorial Process

We prioritize accuracy, clarity, and real-world usefulness. Articles are reviewed for structure, terminology consistency, and alignment with our B2B content guidelines before publishing.

About the China Future Sound Insights Team

We provide manufacturing governance frameworks and DFM guidance for audio and consumer electronics brands. Our content draws from established engineering practices and quality management principles to help product teams make informed sourcing decisions.