📌 Key Takeaways

A factory audit protects your brand only when you demand proof—not promises—before signing any supplier contract.

- Evidence Beats Impressions: Nice showrooms and current certificates mean nothing if you can’t watch staff pull a unit’s full build history on the spot.

- Focus on What Breaks Programs: The biggest failures come from quality slipping after the first run and suppliers quietly swapping parts—so design your questions around those two risks.

- “Show Me” Closes the Gap: Every time a supplier says “we can do that,” ask to see the record, log, or screenshot that proves they’ve actually done it before.

- Score and Compare Systematically: Use a simple 0/1/2 rubric across 50 questions—75+ points means proceed, 50–74 needs a fix-it plan, below 50 means walk away.

- Three Categories Predict Everything: If traceability, test calibration, and change control are weak, no corrective plan will protect your launch.

Audit for records, not reassurances—searchable proof is the only supplier promise that holds.

Product managers, sourcing leaders, and QA teams vetting amplifier OEM partners will gain a repeatable qualification method here, preparing them for the detailed 50-question framework that follows.

~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~

It’s 9:00 AM on a Monday. You open a supplier audit report that says “failed”—right as your launch timeline tightens. The showroom looked impressive. The samples sounded perfect. The certificates were current. And none of it predicted this.

A factory audit only protects your brand if it produces evidence—not impressions. Instead of asking “Can you build this?”, ask “Show me how you control variation when parts, operators, and lots change.” The questions below expose the two root causes behind most private-label failures: quality drift after the first run, and supply-chain opacity that enables silent component substitutions.

As you plan for the upcoming quarter’s new-SKU launches, supplier qualification becomes your highest-leverage risk-control step. This checklist gives you a repeatable method to convert supplier promises into auditable proof before you sign.

What This Checklist Is (And Who Should Use It)

This is a pre-contract qualification tool for OEM/ODM amplifier manufacturing services. Use it before signing a purchase order, not after your first production run ships with problems. It is built for decision-makers weighing supplier risk, not for teams running day-to-day operations.

Three stakeholder roles benefit most: Product Line Managers validating that the factory can hold spec at scale, Sourcing/Procurement leaders building a defensible supplier recommendation, and QA Leaders who need to verify process controls match the certificates on the wall.

Use it when:

- You’re vetting an OEM/ODM partner for a new amplifier SKU or platform, especially where small component or process shifts can create measurable—and sometimes audible—variation.

- You need to defend a supplier recommendation with artifacts (records, logs, calibration certificates, traceability lookups), not vibe checks.

- You’re auditing remotely and need “show me” evidence via live screen-share or photographed records, not narrative answers.

Most factory audits fail because they validate the wrong things. Touring the showroom, reviewing ISO certificates, and listening to a demo unit tell you almost nothing about what happens when parts change, operators rotate, or your program scales from pilot to volume. The questions here focus on evidence artifacts (records, logs, system screenshots) that prove the factory can maintain consistency when variables shift.

How to Use the Checklist During a Site Visit or Remote Audit

Every question follows the same structure: what to ask, what proof to request, and what answer should raise a red flag. The goal is evidence-first validation.

Scoring approach: Use a simple 0/1/2 rubric for each question.

- 0 = No evidence or vague answers (“We handle that” with nothing to show)

- 1 = Partial evidence or inconsistent process (some documentation, but gaps)

- 2 = Clear evidence with a repeatable system (records, logs, demonstrated capability)

What strong proof artifacts look like: timestamped logs from incoming (IQC), in-process (IPQC), and final (FQC) quality control; FIFO evidence from ERP/WMS screens; test-route control via barcode or QR binding; calibration certificates with next-due dates; reliability lab logs; and reference-unit (golden sample) governance records.

Principles for Effective Audits

The highest-value audit time is spent at IQC stations, line control points, test areas, and quarantine zones, not the showroom. Brief your supplier in advance that you’ll request live demonstrations: a traceability lookup by serial number, a calibration certificate pull, and an engineering change order (ECO) cut-in example. Close every audit with a “records drill”: pick one finished unit and ask for the full build/test history on the spot. If it’s not retrievable in real time, it’s not a dependable control.

Any time a supplier says “we can do that,” the follow-up is “show me the record, system, or log that proves you’ve done it.” Capability claims without evidence are marketing, not qualification.

For a complementary evaluation framework, see the Factory Evaluation for Amplifier Manufacturing: A Shareable Thirty-Point Checklist.

50 OEM Audit Questions, Grouped by Risk Category

The questions below organize into six categories that map directly to failure modes: quality system gaps, incoming material risks, process control weaknesses, test and calibration failures, traceability blind spots, and change control breakdowns.

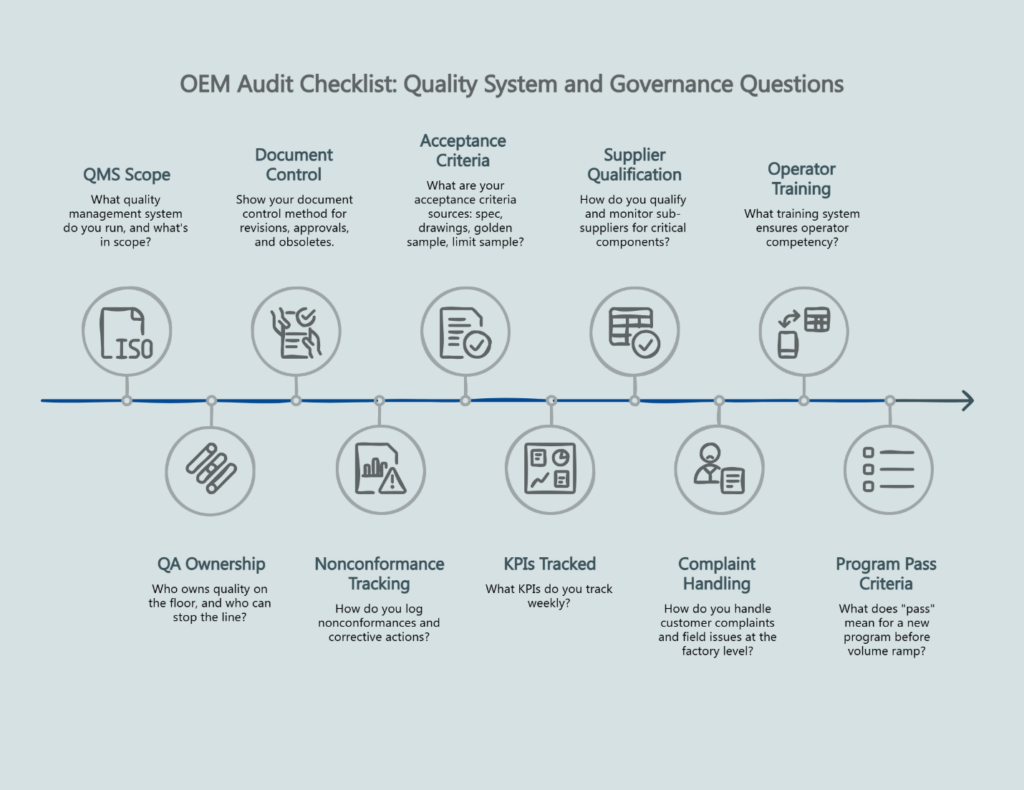

A. Quality System and Governance (Questions 1–10)

1. What quality management system do you run, and what’s in scope? Proof: Certificate scope plus current QMS document index; last internal audit summary. Red flag: “We have ISO” but no controlled document list or evidence of internal audits.

2. Who owns quality on the floor, and who can stop the line? Proof: Organization chart with named QA owner; documented escalation and line-stop authority. Red flag: QA exists only as “final inspection” with no in-process authority.

3. Show your document control method for revisions, approvals, and obsoletes. Proof: Controlled work instruction with revision history and approval signatures. Red flag: Uncontrolled PDFs, “latest version on WeChat,” or no revision tracking.

4. How do you log nonconformances and corrective actions? Proof: Last three NCRs with root cause analysis and closure evidence. Red flag: No closed-loop corrective action system; NCRs without follow-through.

5. What are your acceptance criteria sources: spec, drawings, golden sample, limit sample? Proof: Signed sample approval record; documented storage and labeling approach for reference units. Red flag: Acceptance is subjective (“sounds good”) with no measurable criteria.

6. What KPIs do you track weekly? Proof: Dashboard screenshot or weekly report showing first-pass yield (FPY), scrap rate, rework rate, and defective parts per million (DPPM). Red flag: “We don’t track that” or metrics exist only for customer audits.

7. How do you qualify and monitor sub-suppliers for critical components? Proof: Approved vendor list with evaluation criteria; most recent supplier scorecard. Red flag: No supplier controls beyond price negotiation.

8. How do you handle customer complaints and field issues at the factory level? Proof: Complaint log template and a closed example with root cause analysis. Red flag: No systematic method for connecting field failures to production data.

9. What training system ensures operator competency? Proof: Training matrix with role-based requirements; sign-off records for key processes. Red flag: No documented training requirements or competency verification.

10. What does “pass” mean for a new program before volume ramp? Proof: Gate checklist with pilot run exit criteria and first-article acceptance requirements. Red flag: “Prototype approval is enough” with no formal production readiness review.

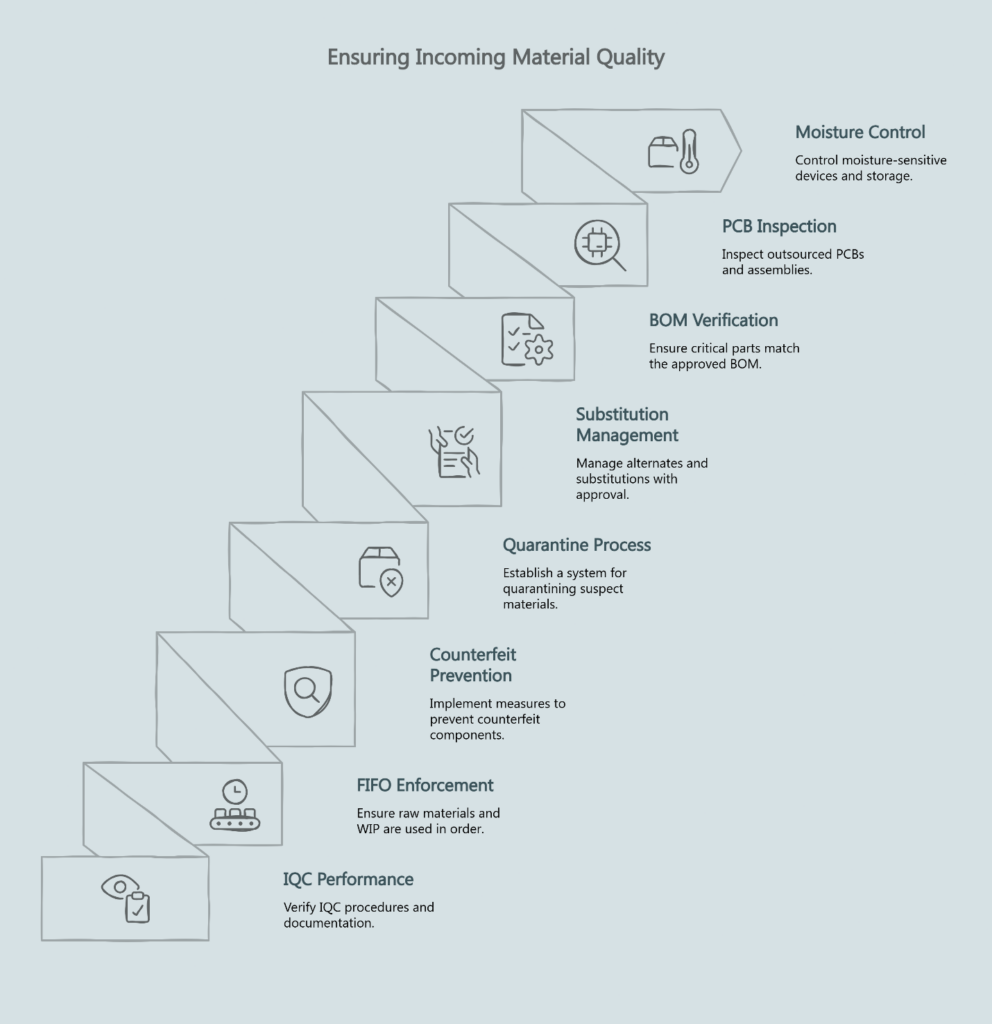

B. Incoming Materials and Supplier Control (Questions 11–18)

11. Where is IQC performed, and what do you inspect on inbound materials? Proof: IQC checklist with sampling plan; physical IQC area with active inspection records. Red flag: No dedicated IQC area or “we trust our suppliers.”

12. How do you enforce FIFO for raw materials and WIP? Proof: ERP/WMS workflow screenshot showing date-based material allocation. Red flag: “We manage it manually” with no system enforcement.

13. How do you prevent counterfeit or off-spec components? Proof: Supplier trace documents; lot-level labeling on incoming materials. Red flag: No lot-level tracking or component authentication process.

14. Show your material quarantine process for suspect or hold stock. Proof: Quarantine tags, segregated storage area, and disposition log. Red flag: Hold stock stored with good stock; no physical or system segregation.

15. How do you manage alternates and substitutions when parts are constrained? Proof: Substitution approval form requiring engineering and customer sign-off. Red flag: “We’ll swap if needed” without documented approval workflow.

16. How do you verify critical parts match the approved BOM? Proof: BOM control system with receiving verification checkpoints. Red flag: No BOM governance; parts accepted without BOM cross-reference.

17. What’s your incoming inspection for PCBs and assemblies if outsourced? Proof: Incoming PCB checklist with defect criteria and sampling rates. Red flag: “Our PCB supplier checks it” with no incoming verification.

18. How do you control moisture-sensitive devices and storage conditions? Proof: MSD storage log with humidity monitoring; MSL handling procedures. Red flag: No controlled storage for moisture-sensitive components.
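The FIFO evidence requested in Question 12 can be spot-checked from an exported material issue log. The following is a minimal Python sketch, assuming the factory can export the order in which lots were issued along with each lot's receipt date. The function name `fifo_violations`, the log layout, and the lot IDs are hypothetical, and the check ignores partial-lot consumption.

```python
from datetime import date


def fifo_violations(issue_log):
    """Flag lots issued after a newer lot had already been issued.

    issue_log: list of (lot_id, received_date) tuples, in issue order.
    Under strict FIFO, receipt dates never decrease along the issue order.
    """
    violations = []
    newest_seen = None
    for lot_id, received in issue_log:
        if newest_seen is not None and received < newest_seen:
            # An older lot was still in stock when a newer one went to the line.
            violations.append(lot_id)
        else:
            newest_seen = received
    return violations


# Made-up sample data: LOT-C (received Feb 1) was issued before LOT-B (Jan 20).
sample_log = [
    ("LOT-A", date(2024, 1, 5)),
    ("LOT-C", date(2024, 2, 1)),
    ("LOT-B", date(2024, 1, 20)),
]
print(fifo_violations(sample_log))  # ['LOT-B']
```

An empty result does not prove system enforcement on its own; it only shows that the exported sample is consistent with FIFO.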

C. Process Control and Workmanship (Questions 19–26)

19. Show the process flow for the line from kitting to pack-out. Proof: Flow chart with station list and operation sequence. Red flag: “We do it differently each time” or no documented process flow.

20. Where are in-process checks, and what do they verify? Proof: IPQC checklist at specific stations with acceptance criteria. Red flag: Inspection only at end-of-line; no in-process quality gates.

21. What’s the defined rework policy? Proof: Rework instruction with authorization rules and limits on rework cycles. Red flag: Unlimited rework with no tracking or disposition rules.

22. How do you prevent mixed builds when multiple revisions are active? Proof: Revision control at kitting stage; line clearance checklist between builds. Red flag: Parts for multiple revisions stored together; no changeover verification.

23. What’s your ESD control program? Proof: ESD audit records; wrist-strap tester logs; grounding verification. Red flag: No visible ESD discipline or “we’re careful.”

24. How are fixtures and jigs maintained and validated? Proof: Fixture maintenance log with calibration schedule. Red flag: Ad hoc “fix when broken” approach with no preventive maintenance.

25. How do you control torque and adhesive processes? Proof: Specification with verification method (torque charts, cure time verification). Red flag: No measurable controls; “operators know what’s right.”

26. How do you handle line changes between models? Proof: Changeover checklist with first-piece verification record. Red flag: No formal changeover process; production starts without verification.

D. Test, Calibration, and Reliability (Questions 27–36)

27. What tests are performed at end-of-line, and what are the pass/fail criteria? Proof: Test plan with limits table and measurement points. Red flag: “We listen to it” or subjective pass/fail determination.

28. Show calibration records for critical test equipment. Proof: Calibration certificates for Audio Precision, KLIPPEL, or equivalent systems; calibration schedule with next-due dates. Red flag: No calibration evidence; expired certificates; “the equipment is new.”

29. Who owns test limits, and how are they changed? Proof: Limits revision record with approval signatures and change history. Red flag: Technicians can modify limits without engineering approval.

30. How do you validate test repeatability and measurement system stability? Proof: Repeatability check log or Gage R&R study results. Red flag: No concept of measurement system analysis; assumed accuracy.

31. What happens when a unit fails test? Proof: Failure workflow showing containment, disposition, and re-test rules. Red flag: Failed units re-enter the line without diagnosis or tracking.

32. Do you run aging, burn-in, or stress screening? Proof: Aging record template with duration, conditions, and sample log. Red flag: “Not needed” without technical rationale; no reliability screening.

33. Do you have a reliability lab, and what’s tested? Proof: Reliability test plan with periodic test records (thermal cycling, power testing). Red flag: No reliability evidence; “we test at qualification only.”

34. How do you verify performance consistency versus reference units? Proof: Golden sample storage protocol with controlled access and sign-off method. Red flag: Reference units not controlled; golden samples used for demos.

35. How do you validate firmware and software versions at pack-out? Proof: Version capture record showing firmware verification per unit or batch. Red flag: Version tracking is informal; “we flash the latest.”

36. Can you show defect Pareto data for the last three months? Proof: Pareto chart or defect summary with failure mode ranking. Red flag: No structured defect analysis; issues handled case-by-case.
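For Question 28, the calibration schedule's next-due dates can be compared against the audit date in a few lines. This is a sketch only, assuming you have transcribed the schedule into a mapping of equipment ID to next-due date. The function name `overdue_equipment` and the equipment IDs are invented for illustration.

```python
from datetime import date


def overdue_equipment(calibration_due, audit_date):
    """Return IDs of equipment whose next-due calibration date has passed.

    calibration_due: dict mapping equipment ID -> next-due date.
    """
    return sorted(
        equipment_id
        for equipment_id, next_due in calibration_due.items()
        if next_due < audit_date
    )


# Made-up schedule for illustration.
schedule = {
    "AUDIO-ANALYZER-01": date(2025, 1, 15),
    "LOAD-BANK-02": date(2024, 6, 30),
}
print(overdue_equipment(schedule, date(2024, 9, 1)))  # ['LOAD-BANK-02']
```

Equipment due exactly on the audit date is treated as still in calibration here; tighten the comparison if your policy differs.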

E. Traceability and Data Access (Questions 37–44)

37. What’s the unique unit identifier, and when is it assigned? Proof: Serial number or QR code policy with assignment point in process flow. Red flag: Serials assigned after the fact; batch-only identification.

38. Can you pull the full test history for a specific serial number on demand? Proof: Live lookup demonstration during the audit. Red flag: “We’ll find it later” or data retrieval requires manual search.

39. Can you pull test data for a unit shipped six months ago? Proof: Historical lookup demonstration with actual shipped serial. Red flag: Data retention unclear; “we keep records for a while.”

40. What does the traceability log capture? Proof: Sample log screenshot showing component lot, operator ID, test timestamp, station. Red flag: Traceability is batch-only with no unit-level binding.

41. How do you bind critical component lots to units? Proof: Scan points in process flow showing component-lot capture and unit binding. Red flag: No component-lot capture; “we track by purchase order.”

42. How do you control traveler records and prevent tampering? Proof: System access controls and audit trail for production data. Red flag: Editable spreadsheets; paper travelers without version control.

43. Who can access and modify production data? Proof: Role-based permissions model with audit logging. Red flag: No access controls; anyone can edit records.

44. What’s your data retention policy and export format for customers? Proof: Documented retention policy with customer export examples. Red flag: “We don’t share data” or undefined retention period.
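To make the unit-level binding in Questions 37 to 41 concrete, here is a rough Python sketch of what a traceability record and a serial-number lookup might look like. The class names `TraceRecord` and `TraceLog`, the field layout, and all sample values are assumptions for illustration; a real factory system would sit on a database, not in memory.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class TraceRecord:
    serial: str            # unique unit identifier (Question 37)
    station: str           # station where the event was recorded
    operator_id: str
    timestamp: datetime    # test or scan time
    component_lots: tuple  # critical component lots bound to the unit (Question 41)
    result: str            # e.g. "PASS" or "FAIL"


class TraceLog:
    """In-memory stand-in for a factory traceability database."""

    def __init__(self):
        self._by_serial = defaultdict(list)

    def add(self, record):
        self._by_serial[record.serial].append(record)

    def history(self, serial):
        """Return a unit's records in time order; empty list if unknown."""
        return sorted(self._by_serial.get(serial, []), key=lambda r: r.timestamp)


# Made-up records, deliberately added out of time order.
log = TraceLog()
log.add(TraceRecord("SN0001", "EOL-TEST", "OP17",
                    datetime(2024, 3, 5, 14, 30), ("CAP-LOT-88",), "PASS"))
log.add(TraceRecord("SN0001", "SMT-1", "OP04",
                    datetime(2024, 3, 5, 9, 10), ("CAP-LOT-88",), "PASS"))
for rec in log.history("SN0001"):
    print(rec.timestamp.isoformat(), rec.station, rec.result)
```

During the records drill, the equivalent of `history(serial)` is what the supplier should be able to run live: one identifier in, the full build and test trail out.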

F. Change Control and Program Management (Questions 45–50)

45. How are ECOs raised, reviewed, and approved? Proof: ECO form template and last approved engineering change order. Red flag: Changes happen informally; no cross-functional review.

46. How do you define cut-in and prevent mixed builds? Proof: Cut-in plan example showing serial number, date, or lot boundary. Red flag: “We’ll use up old stock” with no segregation or tracking.

47. What happens to old stock when an ECO is cut in? Proof: Disposition record showing scrap, rework, or use-as-is decision with approval. Red flag: No formal disposition; old and new parts mixed.

48. Who owns program timelines and cross-functional coordination? Proof: Named program owner with weekly cadence documentation. Red flag: No single accountable owner; coordination is ad hoc.

49. What’s your containment plan when defects appear during ramp? Proof: Containment playbook with roles, time-to-contain targets, and customer communication protocol. Red flag: “We’ll see when it happens” with no pre-defined response.

50. What must be true before you declare start of production (SOP) for volume? Proof: Production readiness checklist with sign-offs from engineering, quality, and operations. Red flag: SOP timing is calendar-driven only; no readiness gates.
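The serial-number cut-in boundary in Question 46 lends itself to a simple mixed-build check. The sketch below assumes numeric serial numbers and a single ECO that moves the build from one revision to another. The function name `mixed_build_violations` and the sample data are hypothetical.

```python
def mixed_build_violations(units, cut_in_serial, old_rev, new_rev):
    """Return serials whose built revision contradicts the ECO cut-in boundary.

    units: iterable of (serial_number, revision) pairs, serials as integers.
    Units below cut_in_serial must be old_rev; units at or above must be new_rev.
    """
    violations = []
    for serial, rev in units:
        expected = new_rev if serial >= cut_in_serial else old_rev
        if rev != expected:
            violations.append(serial)
    return violations


# Made-up build records: unit 1004 was built to revision A after the cut-in at 1003.
built = [(1001, "A"), (1002, "A"), (1003, "B"), (1004, "A"), (1005, "B")]
print(mixed_build_violations(built, cut_in_serial=1003, old_rev="A", new_rev="B"))  # [1004]
```

The same comparison works with a date or lot boundary if you swap the serial number for the relevant key.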

How to Interpret Answers: Red Flags Versus Green Flags

Think in failure modes, not friendliness. The most revealing audit question is rarely “What equipment do you have?”—it’s “Show me the record that proves you can reproduce the same result six months later.”

Red flags that signal systemic risk:

- No calibration records or expired certificates for test equipment

- Paper-only travelers with no digital backup or audit trail

- Unbounded rework with no cycle limits or disposition rules

- No ECO cut-in discipline; changes happen without serial/lot boundaries

- Traceability exists “somewhere” but can’t be demonstrated live

- Golden samples used for customer demos instead of controlled storage

Green flags that indicate process maturity:

- Barcode or QR binding of test data to individual unit serial numbers

- Documented IQC, IPQC, and FQC gates with acceptance criteria

- Reliability lab logs showing periodic testing against design requirements

- Controlled FIFO via ERP/WMS with system-enforced date sequencing

- ECO workflow with defined cut-in boundaries and old-stock disposition

- Live traceability lookup during the audit—not promised for later

ISO 9001:2015 certification supports a documented quality management system, but certification alone does not replace on-site evidence of execution. The certificate proves the system exists on paper; your audit proves it works on the floor.

A practical way to decide: If the factory is strong on Traceability, Test/Calibration, and Change Control, you can often work through weaker areas with a corrective plan. If they’re weak on those three categories, you’re betting your brand on hope.

A Simple Audit Scorecard

Use this scorecard framework to structure your site visit or remote audit. Score each question using the 0/1/2 rubric, then calculate category and overall totals.

Scoring categories:

| Category | Questions | Max Score |
|---|---|---|
| Quality System & Governance | 1–10 | 20 |
| Incoming Materials & Supplier Control | 11–18 | 16 |
| Process Control & Workmanship | 19–26 | 16 |
| Test, Calibration & Reliability | 27–36 | 20 |
| Traceability & Data Access | 37–44 | 16 |
| Change Control & Program Management | 45–50 | 12 |
| Total | 1–50 | 100 |

Decision thresholds:

- Pass (75+ points): Strong evidence across all categories, particularly in Traceability, Test/Calibration, and Change Control. Proceed to pilot run with contract terms that lock golden sample approval and change control governance.

- Conditional (50–74 points): Gaps exist but are addressable. Require a corrective action plan (CAPA) for weak categories and schedule a re-audit checkpoint before volume commitment.

- Fail (below 50 points): Missing fundamental controls in traceability, calibration integrity, or change management. Do not proceed without major remediation.
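The rubric and thresholds above reduce to simple arithmetic. Here is a minimal Python sketch, assuming scores are recorded as a mapping from question number to 0, 1, or 2. The names `CATEGORIES`, `category_totals`, and `decide` are illustrative and not part of any standard tool.

```python
# Question ranges per category, taken from the scorecard table.
CATEGORIES = {
    "Quality System & Governance": range(1, 11),
    "Incoming Materials & Supplier Control": range(11, 19),
    "Process Control & Workmanship": range(19, 27),
    "Test, Calibration & Reliability": range(27, 37),
    "Traceability & Data Access": range(37, 45),
    "Change Control & Program Management": range(45, 51),
}


def category_totals(scores):
    """Sum 0/1/2 scores per category; unanswered questions count as 0."""
    for question, score in scores.items():
        if not 1 <= question <= 50 or score not in (0, 1, 2):
            raise ValueError(f"invalid score {score!r} for question {question!r}")
    return {
        name: sum(scores.get(q, 0) for q in questions)
        for name, questions in CATEGORIES.items()
    }


def decide(total):
    """Map an overall total (0 to 100) to the decision thresholds."""
    if total >= 75:
        return "pass"
    if total >= 50:
        return "conditional"
    return "fail"


# Made-up audit: full marks everywhere except categories B and C, scored 1 each.
example = {q: 2 for q in range(1, 51)}
example.update({q: 1 for q in range(11, 27)})
totals = category_totals(example)
overall = sum(totals.values())
print(totals)
print(overall, decide(overall))  # 84 pass
```

The overall number is only a first filter. A supplier can clear 75 points and still be weak in Traceability, Test/Calibration, or Change Control, so review those three category totals separately before proceeding.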

For each question, note the document ID, screenshot reference, or photo that supports your score. This evidence protects your recommendation when presenting to stakeholders.

For additional context on the OEM/ODM manufacturing process, including how design handoff connects to production readiness, see Understanding the OEM/ODM Manufacturing Process: From Your Design to a Shipped Product.

Next Steps After the Audit

If the supplier passes: Move to pilot run negotiations. Your contract terms should explicitly lock golden sample approval as the production reference, require customer notification and approval for any component substitutions, define ECO cut-in boundaries (serial number or lot), and specify data access and retention requirements.

If the result is conditional: Document the specific gaps and request a CAPA (Corrective and Preventive Action) plan with timelines. Schedule a re-audit checkpoint—typically 60–90 days—to verify remediation before committing to volume orders.

If the supplier fails: The gaps are too fundamental to address with a CAPA. Look for alternative partners or require a complete quality system overhaul before re-engaging.

Quality drift after the first run is the silent failure mode in private-label audio. Traceability turns supplier accountability from promises into searchable records. Change control—specifically ECO cut-in discipline—is where “mixed build” disasters start.

Suppliers at China Future Sound operate with ISO 9001:2015 certification, multi-stage inspection (IQC/IPQC/FQC), KLIPPEL QC testing with golden sample management, and barcode/QR traceability that binds test data to individual units. Explore our pro audio and car audio production capabilities to see how these controls apply to specific product categories.

References

- ISO 19011:2018 — Guidelines for auditing management systems

- ISO 9001:2015 — Quality management systems — Requirements

- OSHA — Nationally Recognized Testing Laboratory (NRTL) Program

- NIST SP 800-161 Rev. 1 — Cybersecurity Supply Chain Risk Management Practices

Disclaimer: This article is for informational purposes and should not replace professional advice. Audit requirements vary by industry, product category, and regulatory environment.
