📌 Key Takeaways

Cross-functional supplier debates end when Product, Procurement, and Quality commit to explicit criteria and weightings before any vendor conversation begins.

- Document Before Debating: A decision weighting matrix with pre-set criteria blocks, weights, and acceptance thresholds prevents anecdotal persuasion from overriding hard evidence during supplier reviews.

- Total Landed Cost Reveals True Economics: Unit price alone hides NRE, tooling, logistics, rework, and warranty exposure—costs that often dwarf the difference between competing BOM quotes.

- QMS Maturity Gates the Program: ISO 9001 evidence, CAPA histories, traceability protocols, and reliability test summaries are non-negotiable checkpoints that predict mass-production stability better than proposals.

- Pilots Predict Production Reality: A 200–500 unit pilot with objective exit criteria for yield, stress testing, and change control exposes process weaknesses before they become costly ramp failures.

- Time-Boxed Workshops Create Commitment: A 90-minute facilitation session with silent scoring, tie-break rules, and conflict logging converts abstract preferences into a shared decision model the entire committee defends.

Explicit frameworks turn circular debates into transparent trade-offs, reducing post-decision conflict and accelerating program launches.

Product management leads, procurement managers, and quality executives at audio and consumer electronics brands will gain a practical alignment framework here, preparing them for the detailed decision model and gate flow that follow.

The conference room has gone quiet again. Product wants proven reliability data. Procurement points to the unit-price spreadsheet. Quality raises concerns about compliance documentation. Everyone believes they’re right—and in isolation, they are. The challenge isn’t that any single function lacks valid priorities; it’s that without a shared decision model, cross-functional teams cycle through the same debates at every vendor review.

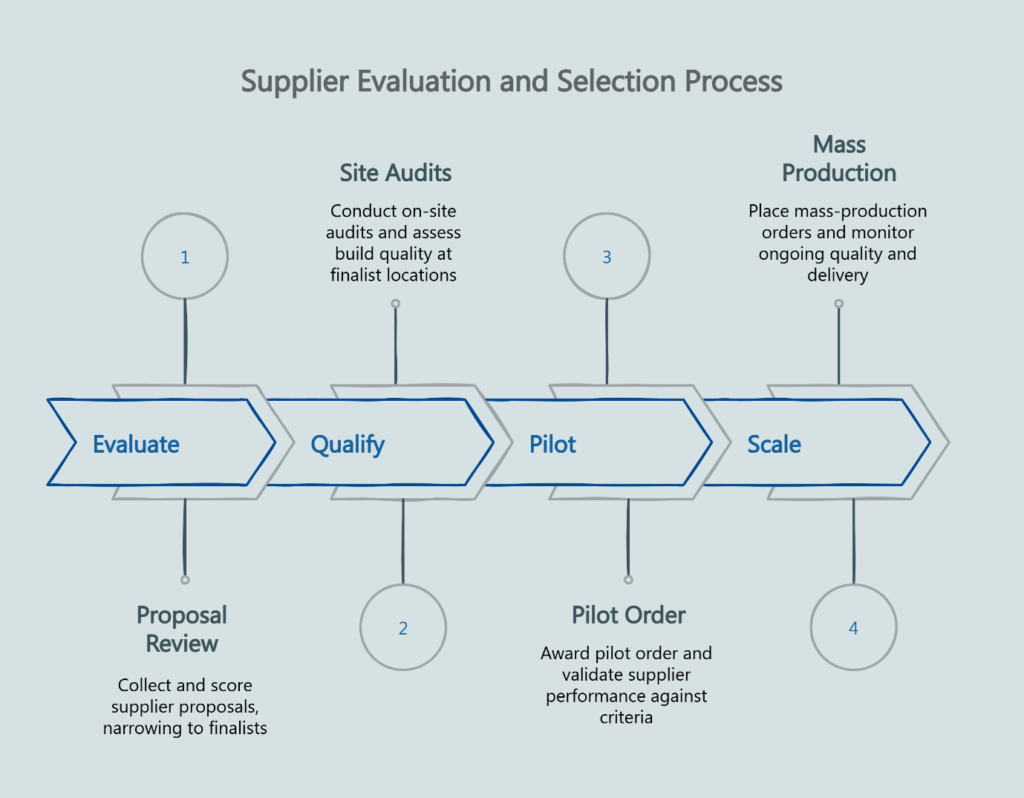

An OEM amplifier supplier is a contract manufacturing partner with engineering, test, and quality management system (QMS) capability to design and produce amplifiers to your brand’s requirements. Think of it like hiring a dedicated pit crew for your amplifier line: you need speed, reliability, and coordination under pressure. Pilot units often pass initial inspection, yet mass production stumbles when QMS gates and change control processes aren’t mature. The path forward is a documented framework (Evaluate → Qualify → Pilot → Scale) backed by continuous audits of QMS maturity, reliability testing, compliance readiness, and change control discipline.

“A documented model ends circular debates.”

A documented decision model ends circular debates across Product and Procurement

Alignment fails without a documented decision model and weightings. When criteria remain implicit, each stakeholder interprets “quality” or “risk” through their functional lens, leading to endless re-litigation of the same points.

Define criteria, weights, and acceptance thresholds before vendor meetings

Set the rules before conversations begin. A decision weighting matrix forces the buying committee to articulate what matters most—and by how much—before any supplier pitches their capabilities. This pre-commitment prevents the loudest voice from dominating in the moment and creates accountability for the final choice.

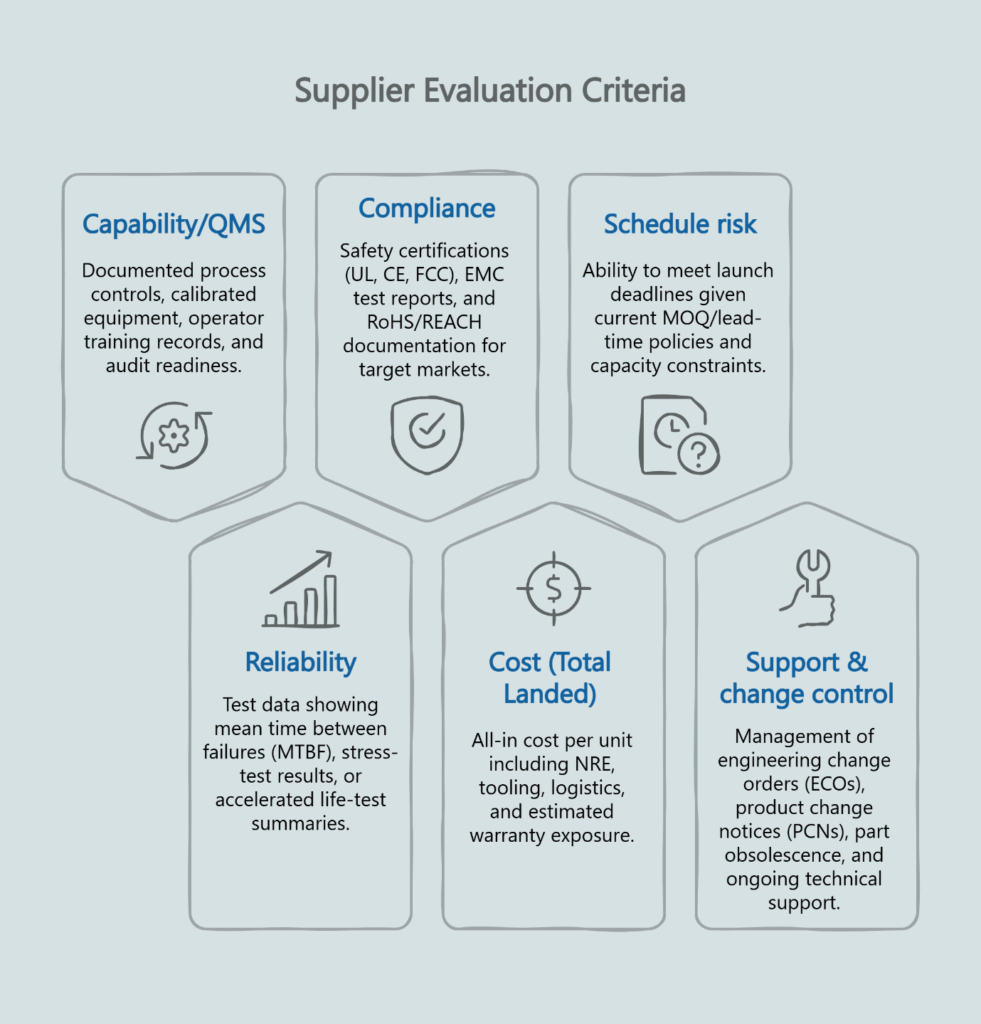

The matrix should capture six criteria blocks: Capability/QMS, Reliability, Compliance, Cost (total landed cost), Schedule risk, and Support & change control. Each block receives a weight reflecting its importance to this specific program. If your product targets safety-critical markets, compliance might carry 25% of the total weight. If launch timing is non-negotiable, schedule risk might deserve 20%.

Make QMS maturity and reliability data non-negotiable gate checks

QMS maturity and reliability data are non-negotiable gates. A supplier’s proposal matters less than their documented track record of process control and test discipline. Request evidence of ISO 9001 quality management systems, corrective and preventive action (CAPA) histories, lot traceability procedures, incoming inspection protocols, and summaries from highly accelerated life testing (HALT) or highly accelerated stress screening (HASS) programs.

Suppliers without mature QMS infrastructure will struggle when production volumes ramp. The risk isn’t always catastrophic failure—it’s often subtle yield degradation, inconsistent parametric performance, or delayed root-cause analysis when issues do emerge. A factory evaluation checklist can help you assess these capabilities systematically during site visits.

Total landed cost beats unit price in real decisions

Procurement teams face pressure to reduce bill-of-material (BOM) costs, but focusing solely on unit price creates hidden exposure downstream. Total landed cost must include non-recurring engineering (NRE) fees, tooling amortization, test fixtures, logistics, rework labor, and warranty reserve estimates.

Include NRE, tooling, fixtures, logistics, rework, and warranty exposure in the model

A supplier quoting $45 per unit with $50,000 in NRE and tooling costs $500,000 over a 10,000-unit program ($450,000 in units plus $50,000 in setup), exactly the same as a $48 supplier with $20,000 in setup fees ($480,000 plus $20,000). The $3 unit-price gap is fully offset by the lower setup costs, which is why total landed cost, not unit price, is the right basis for comparison.

- NRE: Design support, test program development

- Tooling/fixtures: Custom jigs, ATE updates, production fixtures

- Logistics: Freight, duties, insurance, warehousing

- Rework/scrap: Yield-related costs during ramp

- Warranty exposure: Expected returns and field-failure costs

If design-for-manufacturability (DFM) inputs that affect yield weren’t addressed early, rework costs add to the total and the true cost gap widens further. Warranty exposure is particularly difficult to model without historical data, but it’s also where low-quality suppliers create the most financial damage. A 2% return rate sounds modest until you calculate the cost of reverse logistics, depot repair labor, customer service overhead, and brand reputation impact.
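To make the comparison concrete, here is a minimal Python sketch of a total landed cost model. The `Quote` fields, the function name, and the $60 cost per return are illustrative assumptions, not figures from any real supplier; only the $45/$50,000 and $48/$20,000 quotes come from the example above.

```python
from dataclasses import dataclass


@dataclass
class Quote:
    """One supplier quote. All field names are illustrative assumptions."""
    unit_price: float                # quoted price per unit
    setup_cost: float                # NRE + tooling + fixtures, paid once
    logistics_per_unit: float = 0.0  # freight, duties, insurance, warehousing
    rework_per_unit: float = 0.0     # yield-related rework/scrap during ramp
    return_rate: float = 0.0         # expected fraction of units returned
    cost_per_return: float = 0.0     # reverse logistics + repair + service


def total_landed_cost(q: Quote, units: int) -> float:
    """Program-level cost: one-time setup plus every per-unit cost."""
    per_unit = (
        q.unit_price
        + q.logistics_per_unit
        + q.rework_per_unit
        + q.return_rate * q.cost_per_return  # expected warranty cost per unit
    )
    return q.setup_cost + per_unit * units


# The two quotes from the text: identical totals over 10,000 units.
supplier_a = Quote(unit_price=45.0, setup_cost=50_000)
supplier_b = Quote(unit_price=48.0, setup_cost=20_000)
print(total_landed_cost(supplier_a, 10_000))  # 500000.0
print(total_landed_cost(supplier_b, 10_000))  # 500000.0

# Hypothetical: supplier A also carries a 2% return rate at $60 per return.
supplier_a_with_returns = Quote(45.0, 50_000, return_rate=0.02, cost_per_return=60.0)
print(round(total_landed_cost(supplier_a_with_returns, 10_000)))
```

Under these assumed numbers, a 2% return rate alone adds roughly $12,000 to supplier A’s program cost, enough to break the tie in favor of the nominally more expensive quote.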

Visualize trade-offs as weighted scores, not anecdotes

Anecdotal justifications such as “Supplier A seems really responsive” don’t survive scrutiny when the program hits trouble six months later. A weighted scorecard converts subjective impressions into comparable metrics. Each criterion receives a 1–5 score per supplier, multiplied by its assigned weight, producing a total that reflects the committee’s stated priorities.

This approach doesn’t eliminate judgment, but it makes judgment transparent. When Supplier B scores higher on reliability but lower on cost, the matrix shows exactly how much reliability the team is purchasing and at what price premium. That clarity helps everyone defend the decision later, especially when explaining to executives why the lowest-cost bidder wasn’t selected. Explicit weighting frameworks help reduce post-decision conflict by making trade-offs visible and creating shared accountability for complex decisions.
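As a rough illustration of how the matrix exposes a trade-off, the Python sketch below breaks the score difference between two suppliers down by criterion. The weights, the scores, and the `contribution_gap` helper are hypothetical and would be replaced by whatever your committee agrees on.

```python
# Hypothetical weights in percent (they must sum to 100).
WEIGHTS = {
    "capability_qms": 20,
    "reliability": 20,
    "compliance": 15,
    "cost": 20,
    "schedule_risk": 15,
    "support_change_control": 10,
}


def contribution_gap(scores_x, scores_y, weights=WEIGHTS):
    """Weighted score difference (x minus y) per criterion, biggest swing first."""
    gaps = {c: weights[c] * (scores_x[c] - scores_y[c]) / 100 for c in weights}
    return sorted(gaps.items(), key=lambda kv: abs(kv[1]), reverse=True)


# Hypothetical 1-5 scores: B is stronger on reliability, A is stronger on cost.
supplier_a = {"capability_qms": 4, "reliability": 3, "compliance": 4,
              "cost": 5, "schedule_risk": 4, "support_change_control": 4}
supplier_b = {"capability_qms": 4, "reliability": 5, "compliance": 4,
              "cost": 3, "schedule_risk": 4, "support_change_control": 4}

for criterion, gap in contribution_gap(supplier_b, supplier_a):
    print(f"{criterion:24s} {gap:+.2f}")
```

With these assumed inputs, Supplier B gains 0.40 weighted points on reliability and gives back 0.40 on cost, so the choice between them rests on factors outside the score, and the committee can see that at a glance.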

Pilot-run outcomes predict mass-production stability better than proposals

Pilot-run outcomes predict mass-production stability more than proposals do. A supplier’s engineering deck might showcase impressive test equipment and process documentation, but only a pilot reveals how their systems perform under real program constraints.

Set pilot size, yield targets, and stress tests that mirror line reality

A 50-unit pilot with relaxed acceptance criteria teaches you very little about what happens at 5,000 units per month. Set pilot quantities large enough to expose process variation—typically 200–500 units for mid-volume electronics programs, though the appropriate size depends on your target production volumes, product complexity, and the statistical confidence level required for yield validation. Define first-pass yield targets that match your mass-production expectations, not aspirational goals.

Stress testing during pilots is equally important. Run thermal cycling, power cycling, and vibration tests that represent your product’s operating environment. Suppliers with weak process control often pass initial functional tests but fail when units experience real-world stress.
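One way to reason about pilot size is to ask how much a given build actually proves about yield. The sketch below uses a one-sided Wilson score lower bound, which is a common statistical choice but an assumption here and not a method the framework prescribes. The function name and the pass counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def yield_lower_bound(passed: int, built: int, confidence: float = 0.95) -> float:
    """One-sided Wilson score lower bound on the true first-pass yield."""
    if built <= 0 or not 0 <= passed <= built:
        raise ValueError("need built > 0 and 0 <= passed <= built")
    z = NormalDist().inv_cdf(confidence)  # one-sided critical value
    p = passed / built                    # observed first-pass yield
    denom = 1 + z * z / built
    center = (p + z * z / (2 * built)) / denom
    margin = z * sqrt(p * (1 - p) / built + z * z / (4 * built * built)) / denom
    return center - margin


# Same observed 96% yield, two hypothetical pilot sizes.
small = yield_lower_bound(passed=48, built=50)
large = yield_lower_bound(passed=288, built=300)
print(f"50-unit pilot lower bound:  {small:.3f}")
print(f"300-unit pilot lower bound: {large:.3f}")
```

Both pilots observe the same 96% first-pass yield, but the larger build supports a noticeably higher lower bound, which is the statistical reason a 50-unit pilot says little about a 5,000-unit month.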

Use objective exit criteria to advance from Pilot → MP

Advancing to mass production (MP) should require passing explicit gates, not optimistic assurances that issues will be “worked out in production.” Define exit criteria for first-pass yield, parametric test margins, cosmetic defect rates, and documentation completeness before the pilot begins. When a supplier falls short, the decision to proceed or re-run becomes data-driven rather than political.

This discipline mirrors the first-article approval process used in automotive and aerospace industries, where objective acceptance criteria prevent premature production releases that later require costly corrections. NIST manufacturing quality resources provide additional guidance on establishing reliability gates and validation protocols for electronics manufacturing.
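The exit-criteria idea can be written down as a small, checkable structure before the pilot starts. The following Python sketch is one possible shape. Every criterion name and threshold in it is a placeholder assumption, to be replaced by the values your committee sets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExitCriterion:
    name: str
    threshold: float
    higher_is_better: bool = True

    def passes(self, measured: float) -> bool:
        if self.higher_is_better:
            return measured >= self.threshold
        return measured <= self.threshold


# Hypothetical thresholds, agreed before the pilot begins.
EXIT_CRITERIA = [
    ExitCriterion("first_pass_yield", 0.95),
    ExitCriterion("min_parametric_margin_pct", 10.0),
    ExitCriterion("cosmetic_defect_rate", 0.01, higher_is_better=False),
    ExitCriterion("documentation_complete_pct", 100.0),
]


def gate_decision(measured: dict):
    """Return (proceed_to_mp, failed_criteria). Missing data counts as a failure."""
    failures = [
        c.name for c in EXIT_CRITERIA
        if c.name not in measured or not c.passes(measured[c.name])
    ]
    return (not failures, failures)


pilot_results = {
    "first_pass_yield": 0.93,
    "min_parametric_margin_pct": 12.5,
    "cosmetic_defect_rate": 0.004,
    "documentation_complete_pct": 100.0,
}
print(gate_decision(pilot_results))  # (False, ['first_pass_yield'])
```

Treating a missing measurement as a failure is a deliberate design choice in this sketch: it keeps "we will work it out in production" from slipping through as an unmeasured pass.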

Run a 90-minute alignment workshop to set weights and gates

Time-boxed facilitation converts abstract preferences into concrete commitments. A focused 90-minute session can produce a decision model that all stakeholders commit to using.

Pre-work: collect QMS artifacts, reliability summaries, and DFM notes

Productive workshops require preparation. Before the session, assign each function to gather key inputs. Product collects DFM notes and specification requirements. Procurement gathers cost breakdowns and lead-time policies. Quality assembles QMS certifications, CAPA summaries, and reliability test data from prospective suppliers.

Walking into the workshop with these artifacts ensures discussions remain evidence-based rather than speculative. When Quality claims a supplier lacks traceability, they can point to specific documentation gaps rather than general impressions.

Live: voting, tie-break rules, and conflict logging

During the live session, structure the voting process to reduce groupthink. Begin with a silent, independent scoring round where each participant assigns their preferred weights to the six criteria blocks. Reveal all scores simultaneously, then discuss significant gaps before converging on final weights.

When consensus isn’t possible, define a tie-break rule in advance—perhaps the program manager holds the deciding vote, or Quality/Compliance holds veto power on QMS threshold failures. You might also agree to escalate only if disagreement exceeds 10 percentage points.

Log unresolved conflicts in meeting notes. If Procurement strongly objected to a 15% weight on Schedule risk, document that objection. This record protects everyone if the decision later proves problematic, and it ensures dissenting views aren’t dismissed or forgotten.
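The silent scoring round and the escalation rule can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: "disagreement" is interpreted as the spread between the highest and lowest vote on a criterion, and the participant names and ballots are invented.

```python
from statistics import mean

CRITERIA = ["capability_qms", "reliability", "compliance",
            "cost", "schedule_risk", "support_change_control"]


def summarize_ballots(ballots: dict, escalate_above: float = 10.0) -> dict:
    """ballots maps participant -> {criterion: weight in percent}."""
    for who, ballot in ballots.items():
        if set(ballot) != set(CRITERIA) or sum(ballot.values()) != 100:
            raise ValueError(f"{who}: ballot must cover all criteria and sum to 100")
    summary = {}
    for c in CRITERIA:
        votes = [ballot[c] for ballot in ballots.values()]
        spread = max(votes) - min(votes)  # disagreement in percentage points
        summary[c] = {
            "mean": mean(votes),
            "spread": spread,
            "escalate": spread > escalate_above,
        }
    return summary


# Hypothetical silent ballots, revealed simultaneously.
ballots = {
    "product":     dict(zip(CRITERIA, [20, 25, 15, 15, 15, 10])),
    "procurement": dict(zip(CRITERIA, [15, 15, 10, 35, 15, 10])),
    "quality":     dict(zip(CRITERIA, [30, 20, 20, 10, 10, 10])),
}

for criterion, row in summarize_ballots(ballots).items():
    flag = "DISCUSS" if row["escalate"] else ""
    print(f"{criterion:24s} mean={row['mean']:5.1f} spread={row['spread']:3d} {flag}")
```

In this invented example only Capability/QMS and Cost exceed the 10-point spread, so the discussion time goes to those two blocks and the flagged rows become the starting entries in the conflict log.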

Your Decision Weighting Matrix is the committee’s single source of truth

The matrix becomes the artifact that governs supplier selection. Once built, it serves as the reference point for all evaluation discussions.

Criteria blocks and weights

The six blocks cover the full spectrum of supplier evaluation:

- Capability/QMS: Does the supplier have documented process controls, calibrated equipment, operator training records, and audit readiness?

- Reliability: Can they provide test data showing mean time between failures (MTBF), stress-test results, or accelerated life-test summaries?

- Compliance: Do they maintain safety and regulatory approvals (UL, CE, FCC), EMC test reports, and RoHS/REACH documentation for target markets?

- Cost (Total Landed): What is the all-in cost per unit including NRE, tooling, logistics, and estimated warranty exposure?

- Schedule risk: Can they meet launch deadlines given current MOQ/lead-time policies and capacity constraints?

- Support & change control: How do they manage engineering change orders (ECOs), product change notices (PCNs), part obsolescence, and ongoing technical support?

Assign each block a weight (totaling 100%) based on program priorities. A consumer electronics launch with aggressive timelines might weight Schedule risk at 25%, while a professional audio product targeting safety-critical applications might weight Compliance at 30%.

Acceptance thresholds and scoring

Beyond weights, define minimum acceptance thresholds for critical criteria. A supplier might score well overall but fail a non-negotiable gate, for example by lacking ISO 9001 certification or being unable to provide reliability test data. Flag these thresholds clearly in the matrix so evaluators know when a supplier is disqualified regardless of their total score.

Score each supplier on a 1–5 scale for every criterion, where 1 indicates significant gaps and 5 represents best-in-class capability. Multiply each score by its weight to produce a weighted score per criterion, then sum across all criteria for a total score per supplier.
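Putting weights, thresholds, and scores together, a matrix evaluation might look like the Python sketch below. The weights, minimum scores, and supplier scores are hypothetical, and `evaluate` is an illustrative helper, not part of any published template.

```python
# Hypothetical weights in percent and minimum acceptable scores (gates).
WEIGHTS = {
    "capability_qms": 20,
    "reliability": 20,
    "compliance": 15,
    "cost": 20,
    "schedule_risk": 15,
    "support_change_control": 10,
}
MINIMUMS = {"capability_qms": 3, "reliability": 3}  # non-negotiable gates


def evaluate(scores: dict, weights: dict = WEIGHTS, minimums: dict = MINIMUMS) -> dict:
    """Weighted total on the 1-5 scale, plus any failed acceptance thresholds."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must total 100%")
    if any(not 1 <= scores[c] <= 5 for c in weights):
        raise ValueError("scores must be on the 1-5 scale")
    total = sum(weights[c] * scores[c] for c in weights) / 100
    failed = [c for c, floor in minimums.items() if scores[c] < floor]
    return {"total": total, "disqualified": bool(failed), "failed_gates": failed}


supplier_x = {"capability_qms": 4, "reliability": 5, "compliance": 4,
              "cost": 3, "schedule_risk": 4, "support_change_control": 4}
supplier_y = {"capability_qms": 2, "reliability": 4, "compliance": 5,
              "cost": 5, "schedule_risk": 5, "support_change_control": 4}

print(evaluate(supplier_x))  # total 4.0, not disqualified
print(evaluate(supplier_y))  # total 4.1, disqualified on capability_qms
```

Supplier Y has the higher total in this made-up case but fails the Capability/QMS gate, which is exactly the situation the thresholds exist to catch.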

Objection handling: this framework de-risks schedule and warranty cost

When introducing this framework to stakeholders accustomed to simpler evaluation methods, anticipate two common objections.

“Lowest BOM wins” ignores rework/returns and schedule slips

Procurement teams often face aggressive cost-reduction targets, leading to a default preference for the lowest unit price. That strategy works when all suppliers offer equivalent quality, reliability, and support—but in practice, they rarely do.

The lowest-cost supplier typically achieves that position through reduced test coverage, minimal QMS overhead, or constrained engineering support. Those gaps don’t show up in the initial BOM, but they manifest as higher rework costs when yields fall short, increased warranty returns when reliability proves inadequate, and schedule delays when engineering issues aren’t resolved quickly.

Reframe the conversation from “lowest cost” to “lowest total cost of ownership.” The decision weighting matrix makes this shift tangible by quantifying the trade-offs between unit price and other risk factors.

“We can skip pilots” increases ramp risk and failures

When launch schedules are tight, teams sometimes propose moving directly from design validation to mass production, skipping the pilot phase entirely. This approach occasionally succeeds with very simple products and highly mature suppliers, but it dramatically increases ramp risk for anything involving custom amplifier designs, new test protocols, or suppliers without a proven track record with your brand.

Pilots serve two purposes: they validate that the supplier’s process can meet your requirements at scale, and they surface integration issues between your design and their manufacturing capabilities. Skipping pilots doesn’t eliminate those issues—it just moves their discovery to mass production, where the cost and schedule impact are far more severe.

Gate flow at a glance

The evaluation process follows four sequential gates, each with specific deliverables:

Evaluate → Collect supplier proposals, QMS documentation, reliability summaries, cost breakdowns, and references. Score each supplier using the decision weighting matrix. Narrow the field to 2–3 finalists.

Qualify → Conduct site audits at finalist locations using a factory evaluation checklist. Verify that documented processes match actual practice. Request sample builds or existing product teardowns to assess build quality. Confirm capacity, lead times, and MOQ policies.

Pilot → Award a pilot order to the selected supplier with explicit acceptance criteria for yield, test margins, cosmetic quality, and documentation. Run stress tests and design validation on pilot units. Assess the supplier’s responsiveness to feedback and ability to implement corrections. Gate decision: proceed to MP only if pilot meets all exit criteria.

Scale → Place mass-production orders with ongoing monitoring of quality metrics, delivery performance, and change control discipline. Schedule periodic audits to verify continued QMS compliance. Maintain open communication channels for technical support and issue escalation.
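For teams that track supplier status in a script or spreadsheet export, the four gates can be modeled as a simple ordered sequence. This Python sketch is illustrative only: the `Gate` enum and `next_gate` function are assumed names, and the single boolean stands in for whatever exit criteria each gate actually carries.

```python
from enum import Enum


class Gate(Enum):
    EVALUATE = 1
    QUALIFY = 2
    PILOT = 3
    SCALE = 4


def next_gate(current: Gate, exit_criteria_met: bool) -> Gate:
    """Advance one gate at a time, and only when the current gate's criteria are met."""
    if not exit_criteria_met or current is Gate.SCALE:
        return current  # hold position: re-run the gate or stay in production
    return Gate(current.value + 1)


print(next_gate(Gate.PILOT, exit_criteria_met=False))  # Gate.PILOT
print(next_gate(Gate.PILOT, exit_criteria_met=True))   # Gate.SCALE
```

Because the function can only move one step forward, skipping the pilot is not expressible in this model, which mirrors the intent of the gate flow.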

Implementing the Framework: From Workshop to Ongoing Governance

The decision weighting matrix is available as a one-page PDF that your buying committee can use immediately. The template includes preset criteria blocks, weighting guidance, and scoring instructions. Customize it for your program’s specific priorities, then use it to drive objective supplier evaluations.

If your team would benefit from facilitation support or wants to discuss how this framework applies to your next amplifier program, talk to our team about your requirements.

Disclaimer: This article provides general information about aligning Product Management and Procurement around OEM amplifier supplier selection for educational purposes. Individual circumstances vary significantly based on target regulatory markets, MOQ/lead-time policy, BOM strategy, pilot-run scope and acceptance metrics, QMS maturity, and reliability test planning. For guidance tailored to your buying-committee’s alignment needs, consult a qualified professional.

About the China Future Sound Insights Team

The China Future Sound Insights Team distills complex manufacturing topics into clear, helpful guides. Reviewed for clarity and accuracy; informational only, not a substitute for professional advice.