📌 Key Takeaways

Procurement and QA teams protect the same program better when they agree on what counts as proof from a supplier.

- Agree on What “Ready” Means: When the two teams use different standards for “ready,” a supplier can pass one check and fail the other, and nobody catches it until launch.

- Track Every Unit, Not Just Batches: QR-code tracing at each test station lets both teams prove which checks happened on which units, replacing trust with evidence.

- Lock the Golden Sample: The approved reference sample needs documented custody, storage rules, and automatic re-testing whenever parts or processes change.

- Test for Containment Before You Need It: The real question before launch isn’t “Will anything go wrong?” — it’s “Can we pinpoint and isolate the problem fast if it does?”

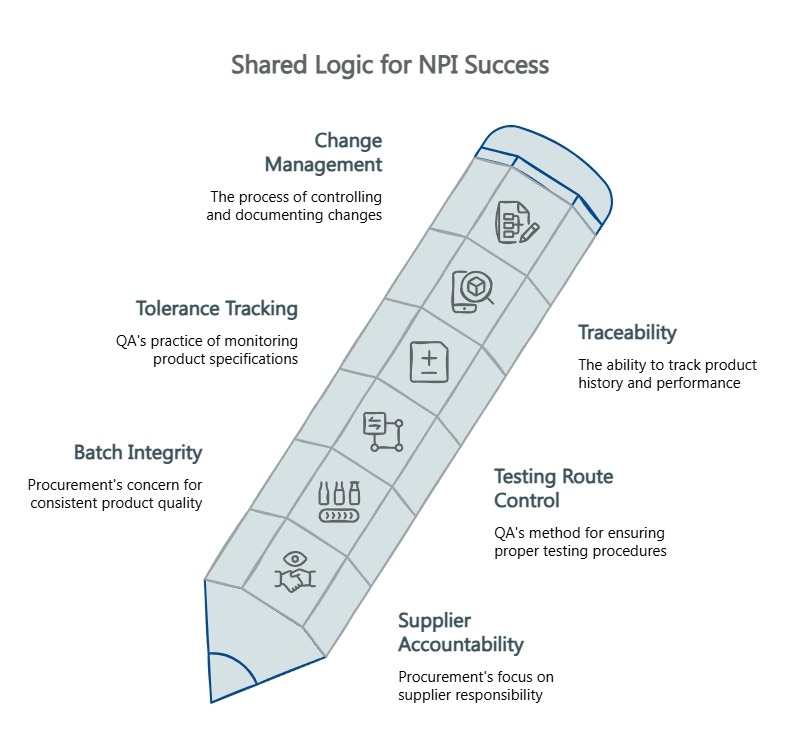

- Speak the Same Language: QA’s “testing route control” and Procurement’s “supplier accountability” mean the same thing — build a shared vocabulary so alignment doesn’t get lost in translation.

Shared proof standards turn internal friction into launch-day confidence.

Program owners, sourcing leads, and QA managers running private-label supplier approvals will find a ready-to-use gate structure and evidence checklist here, preparing them for the detailed framework that follows.

~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~

The conference room splits down the middle.

On one side of the table, Procurement has supplier lead times open on a laptop, a shortlist nearly final, and a launch window that won’t move. On the other, QA has a folder of test reports that raise more questions than they answer — tolerance data that looks thin, a golden sample approval process nobody can quite explain, and zero evidence that what ships at scale will match what passed in the lab.

Both teams are looking at the same supplier. Both are protecting the program. The friction appears because each team is protecting it with a different evidence model. Procurement is optimizing for execution confidence: Can this vendor deliver on time, at spec, without surprises? QA is optimizing for verification depth: Can this vendor prove that production units will replicate the approved design — not just on day one, but on day three hundred?

Both questions are legitimate. The problem is not that these teams disagree. The problem is that they disagree about what counts as proof.

When that disagreement goes unresolved, you get false alignment — a handshake based on different definitions of “ready.” A supplier may appear ready because lead times seem workable, communication is responsive, and the first sample passes a review. Yet none of that proves that test steps will be enforced at scale, that the golden sample will remain the locked reference, or that later defects can be isolated without turning a contained issue into a broad program problem. Neither team has confirmed that the approved design will survive translation into mass production. That gap is where spec-drift, warranty exposure, and late-stage surprises live.

This framework provides the fix: a shared evidence standard and reusable structure for evaluating supplier proof before you commit to scale.

The Shared Logic: What Both Teams Actually Need From a Supplier

Procurement and QA don’t need identical priorities. They need identical proof standards.

Strip away the departmental lens, and both teams depend on the same thing: documented, repeatable, auditable evidence that the approved design survives the transition from sample to production. Procurement calls this supplier accountability and batch integrity. QA calls this testing route control and tolerance tracking. The language differs. The need does not.

Traceability is where technical rigor converts into business confidence. When every unit carries a bound record of which test stations it passed, which QC gates it cleared, and which golden sample it was measured against, Procurement gains a verifiable accountability trail and QA gains proof that the factory didn’t skip steps under production pressure. QR-code production traceability — binding individual unit performance data to a unique identifier at every QC gate — becomes the common language that lets both functions evaluate launch-readiness together. That convergence, unit-level data serving both verification and accountability, is the shared logic this framework is built on.

Guidance from the National Institute of Standards and Technology, including NIST SP 800-161, reinforces this point: end-to-end traceability improves provenance verification and supply-chain integrity. In a private-label program, that traceability supports both QA’s obligation to verify performance and Procurement’s obligation to limit containment risk.

The same logic extends to change handling. ISO/TC 176 guidance on ISO 9001:2015 emphasizes risk-based thinking, documented information, and disciplined change management because stable output depends on controlled variation rather than informal updates. The practical rule is direct: undocumented changes create invisible risk. If a component substitution or process revision happens without a traceable record, the golden sample becomes irrelevant and the production baseline shifts without either Procurement or QA knowing it moved.

For related context, see a shared framework for predictable NPI, why component drift ruins mass-production QA, and mass-production spec-drift.

A Four-Gate Alignment Model for New Product Introduction

A gated approach to NPI is well established — the AIAG Advanced Product Quality Planning (APQP) 3rd Edition manual codifies how gated quality planning aligns sourcing, change management, and traceability. The AIAG Quality Core Tools framework describes how APQP, Control Plans, and SPC work together to make systematic assurance operational. What most private-label programs lack is a shared definition of what evidence each gate requires and which team owns which decision.

The following model gives both functions a common checkpoint sequence.

Gate 1: Design Intent and Verification Basis

The question both teams answer: Do we agree on what we’re building and how we’ll know it’s right?

This gate locks the design specification and the verification method. QA confirms that the performance parameters have a defined test protocol — not just a target number on a spec sheet, but a documented method for measuring it. Procurement confirms that the specification is producible at the target volume without requiring heroic engineering workarounds. If design intent is vague, every later discussion becomes harder to govern.

For a private-label audio program, useful proof may include simulation logic, sample validation methods, and the reason those methods were chosen. Finite-element simulation, KLIPPEL R&D testing, destructive power tests, and long-term power tests are examples of evidence categories that connect design theory to measurable verification.

The artifact that exits this gate is a shared design-intent document reviewed by both functions.

Gate 2: Golden Sample and Handoff Control

The question both teams answer: What becomes the locked reference, and who controls custody?

The golden sample is the physical benchmark against which all production units are measured. In well-run programs, this sample undergoes validation through simulation, destructive testing, and long-term power testing before it earns its status. Once approved, custody must be documented — who holds it, how it’s stored, and what triggers re-validation.

A strong first article is helpful. A controlled first article is far more valuable. A golden sample without governance can drift out of relevance. A controlled sample creates a fixed point for later comparison, which is why golden sample integrity and first-article approval for amplifiers are central to whether launch-readiness means anything.

This is where programs often break down. Gate 2 closes only when golden sample governance is documented: a custody protocol, defined storage conditions, and the events that trigger re-qualification, typically any engineering change or component substitution.
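As a rough illustration of what documented governance can look like as data, the sketch below models a golden sample record with a custody log and re-qualification triggers. The class name, field names, and trigger labels are hypothetical and chosen for this example only; a real program would map them onto whatever quality system the supplier already runs.

```python
from dataclasses import dataclass, field
from datetime import date

# Assumed trigger events, taken from the two examples named in the text.
REQUAL_TRIGGERS = {"engineering_change", "component_substitution"}


@dataclass
class GoldenSample:
    sample_id: str
    revision: str
    custodian: str
    storage_conditions: str
    approved_on: date
    custody_log: list = field(default_factory=list)
    requalification_due: bool = False

    def transfer(self, new_custodian: str, on: date) -> None:
        """Record a custody handoff so the chain of possession stays auditable."""
        self.custody_log.append((on, "transfer", f"{self.custodian} -> {new_custodian}"))
        self.custodian = new_custodian

    def record_event(self, event_type: str, on: date, note: str = "") -> None:
        """Log an event; trigger events flag the sample for re-qualification."""
        self.custody_log.append((on, event_type, note))
        if event_type in REQUAL_TRIGGERS:
            self.requalification_due = True

    def requalify(self, new_revision: str, on: date) -> None:
        """Clear the flag once the sample has been re-validated under a new revision."""
        self.custody_log.append((on, "requalified", f"{self.revision} -> {new_revision}"))
        self.revision = new_revision
        self.requalification_due = False

    def usable_as_reference(self) -> bool:
        return not self.requalification_due


sample = GoldenSample("GS-001", "A", "QA Lab", "sealed case, 20-25 C", date(2024, 1, 10))
sample.record_event("component_substitution", date(2024, 3, 5), "second-source capacitor")
print(sample.usable_as_reference())  # False until the sample is re-qualified
```

The point of the flag is that a change cannot silently leave the old reference in force: until someone re-qualifies the sample, it no longer counts as the benchmark.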

Gate 3: Route-Controlled Production Proof

The question both teams answer: Can the factory prove that every required test step actually happened?

Route control means that each unit’s progression through the production line is tied to logged pass/fail records at each QC station — IQC for incoming materials, IPQC during production, and FQC at final inspection. Barcode or QR-code binding ensures that test data is traceable to the individual unit, not just the batch. The system blocks advancement if a unit hasn’t logged a pass at the previous gate.

Without route control, a supplier can claim “all units tested” without proving it. With it, both teams can audit the production record for any specific unit at any time — the difference between supplier assurance and supplier evidence.
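The enforcement idea can be sketched in a few lines. The example below is a minimal illustration, not a description of any particular factory system: the station order, the `UnitRecord` class, and the unit ID format are assumptions, and treating IQC as a per-unit step is a simplification (in practice incoming inspection usually applies to material lots that are then linked to units).

```python
from dataclasses import dataclass, field

# Hypothetical station order, using the three inspection stages named above.
ROUTE = ["IQC", "IPQC", "FQC"]


class RouteViolation(Exception):
    """Raised when a unit reaches a station without a pass at every earlier one."""


@dataclass
class UnitRecord:
    unit_id: str                                  # value encoded in the unit's QR code (assumed)
    results: dict = field(default_factory=dict)   # station name -> True (pass) / False (fail)

    def log_result(self, station: str, passed: bool) -> None:
        idx = ROUTE.index(station)  # ValueError for a station that is not on the route
        # Block advancement unless every earlier station has a logged pass.
        for prior in ROUTE[:idx]:
            if self.results.get(prior) is not True:
                raise RouteViolation(
                    f"{self.unit_id}: no logged pass at {prior}, blocked at {station}"
                )
        self.results[station] = passed

    def cleared_all_gates(self) -> bool:
        return all(self.results.get(s) is True for s in ROUTE)


unit = UnitRecord("AMP-000123")
unit.log_result("IQC", True)
try:
    unit.log_result("FQC", True)   # skips IPQC, so the system refuses it
except RouteViolation as err:
    print(err)
```

Because the record is keyed to the unit rather than the batch, the same structure answers the audit question directly: for any unit ID, which stations logged a pass and which did not.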

For deeper context, see testing route control and proof your OEM/ODM amp line is production-ready.

Gate 4: Launch-Readiness and Containment Readiness

The question both teams answer: If something goes wrong at scale, can we isolate the problem without pulling the entire inventory?

The right question at this gate is not whether all risk has vanished but whether the remaining risk is visible, bounded, and manageable. Launch readiness is not just about confirming that the first run looks good. It is about confirming containment capability: the ability to trace a defect to a specific component lot, production date, or test station and isolate only the affected units. Component-level data is what makes targeted containment possible, rather than an undifferentiated batch-level response.

Gate 4 closes when both teams have reviewed the supplier’s corrective-action readiness: How fast can they identify root cause? How granular is their component-level data? Can they demonstrate that their traceability system supports targeted containment?
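To make "targeted containment" concrete, here is a small sketch of the lookup a traceability system needs to support. The record layout, lot codes, and unit IDs are invented for illustration; the only assumption that matters is that each unit's build record lists the component lots it consumed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BuildRecord:
    unit_id: str
    production_date: str        # ISO date string
    component_lots: frozenset   # lot codes consumed by this unit (hypothetical format)
    fqc_station: str


def affected_units(records, suspect_lot):
    """Return the IDs of units that consumed the suspect component lot."""
    return sorted(r.unit_id for r in records if suspect_lot in r.component_lots)


records = [
    BuildRecord("AMP-0001", "2024-05-06", frozenset({"CAP-L17", "IC-L03"}), "FQC-1"),
    BuildRecord("AMP-0002", "2024-05-06", frozenset({"CAP-L18", "IC-L03"}), "FQC-1"),
    BuildRecord("AMP-0003", "2024-05-07", frozenset({"CAP-L17", "IC-L04"}), "FQC-2"),
    BuildRecord("AMP-0004", "2024-05-07", frozenset({"CAP-L18", "IC-L04"}), "FQC-2"),
]

hold = affected_units(records, "CAP-L17")
print(hold)  # ['AMP-0001', 'AMP-0003']
print(f"{len(hold)} of {len(records)} units on hold")
```

With batch-level data only, the same defect would put all four units on hold. The difference in scope is what Procurement reads as warranty exposure and QA reads as traceability depth.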

The prevention-versus-failure logic from the ASQ Cost of Quality framework applies here directly: investment in prevention and appraisal protects against the far higher expense of internal and external failures. These activities are not administrative extras. They are the disciplines that keep failures from doing the real damage later, in the field.

The Program Alignment Toolkit

The following tools are designed for internal champions — the program owner, sourcing lead, or QA manager who needs to bring both functions to a shared standard before supplier approval. Each component translates a technical proof requirement into business-readable decision language.

Stakeholder Alignment Matrix

| Supplier Evidence Area | What QA Needs to See | What Procurement Needs to See | Shared Proof That Satisfies Both |
|---|---|---|---|
| Design Verification | Documented test protocol with pass/fail criteria tied to spec | Confirmation that spec is producible at target volume | Signed design-intent document with test method and DFM review |
| Sample Governance | Golden sample validated via simulation and destructive testing; version-controlled custody | Assurance that the reference standard won’t drift mid-program | Golden sample approval record with custody log and re-qualification triggers |
| Production QC | Route-controlled test sequence with unit-level data binding | Batch integrity records showing supplier accountability | QR-code or barcode-bound test data at IQC, IPQC, and FQC stations |
| Containment Readiness | Ability to trace defects to component lot and test station | Warranty liability protection through targeted isolation | Corrective-action process with demonstrated traceability depth |
| Material Flow and Process Control | Process control and tolerance tracking | Material flow stability and predictable execution | FIFO discipline, inspection gates, and documented change control |

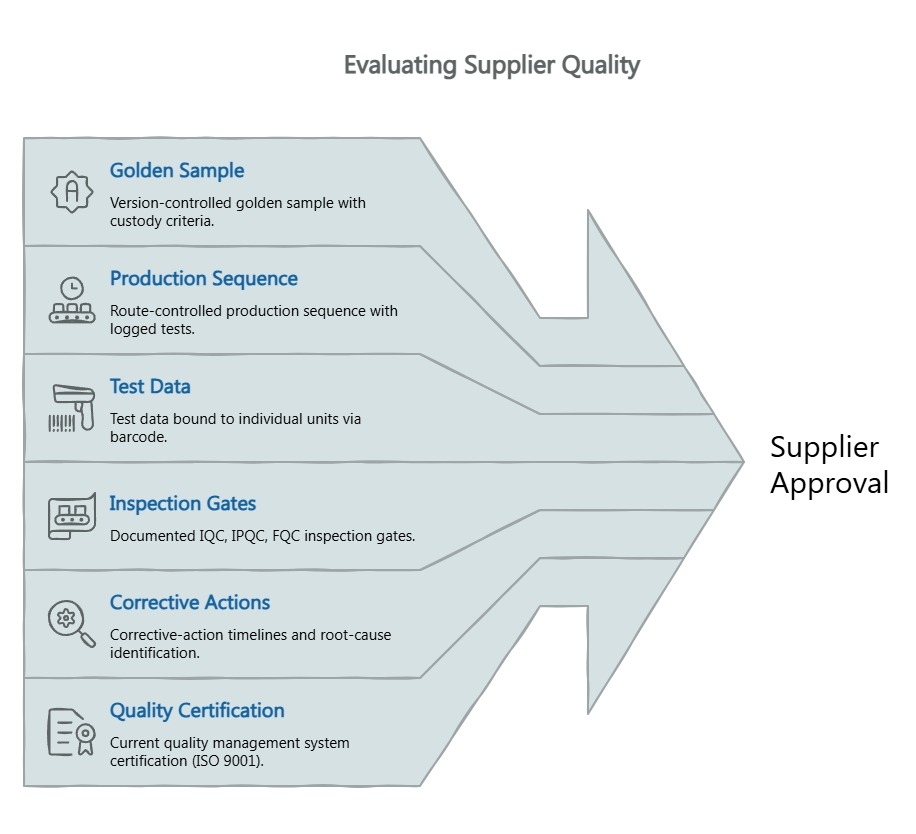

Supplier Evidence Request Checklist

Use this when preparing an RFQ, conducting a supplier audit, or reviewing a vendor for program approval.

- Does the supplier maintain a version-controlled golden sample with documented custody and re-qualification criteria?

- Can the supplier demonstrate a route-controlled production sequence where each unit’s test progression is logged?

- Is test data bound to individual units via barcode or QR code, or only tracked at the batch level?

- Does the supplier operate documented IQC, IPQC, and FQC inspection gates with defined accept/reject criteria?

- Can the supplier provide corrective-action timelines and demonstrate root-cause identification speed?

- Does the supplier hold a current quality management system certification (such as ISO 9001:2015)?

- Can the supplier show reliability testing evidence — both destructive and long-term endurance — for the specific product category?

- How are engineering changes documented, approved, and cut into production?

- Can the supplier isolate suspect units without treating the full program as one undifferentiated batch?

Definition-of-Ready Scorecard

Before approving scale-up, both Procurement and QA should independently confirm each item. If either function cannot confirm, the program is not ready.

| Readiness Criterion | QA Confirms | Procurement Confirms |
|---|---|---|
| Design spec has a documented, repeatable test protocol | ☐ | ☐ |
| Golden sample is validated, version-controlled, and in documented custody | ☐ | ☐ |
| Production line demonstrates route-controlled QC with unit-level traceability | ☐ | ☐ |
| Supplier can demonstrate corrective-action capability with component-level granularity | ☐ | ☐ |
| Reliability test data exists for the product category (destructive and long-term) | ☐ | ☐ |
| Change handling is controlled before scale-up, not after confusion appears | ☐ | ☐ |
| Both functions agree on what “launch-ready” means in writing | ☐ | ☐ |
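The decision rule behind the scorecard (every criterion confirmed by both functions, otherwise not ready) is simple enough to express directly. The sketch below assumes the seven criteria are tracked under short keys; those key names are placeholders invented here, not part of the scorecard itself.

```python
# Hypothetical short keys for the seven scorecard rows above.
CRITERIA = [
    "documented_test_protocol",
    "golden_sample_in_custody",
    "route_controlled_qc",
    "corrective_action_capability",
    "reliability_test_data",
    "change_control_in_place",
    "written_launch_ready_definition",
]


def readiness(qa: dict, procurement: dict):
    """Return (ready, open_items). An item stays open unless BOTH functions confirm it."""
    open_items = [
        c for c in CRITERIA
        if not (qa.get(c, False) and procurement.get(c, False))
    ]
    return (not open_items, open_items)


qa = {c: True for c in CRITERIA}
procurement = {c: True for c in CRITERIA}
procurement["change_control_in_place"] = False   # Procurement has not yet confirmed

ready, open_items = readiness(qa, procurement)
print(ready, open_items)  # False ['change_control_in_place']
```

Returning the open items, not just a yes or no, keeps the review focused on the specific gap rather than on which team is holding up the launch.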

Language Translation Layer

The same evidence often needs two vocabularies. This reference helps internal champions translate between functions without losing precision.

When QA says “testing route control,” Procurement hears “supplier accountability.” Both mean: the factory can prove which tests each unit passed.

When QA says “tolerance tracking,” Procurement hears “batch integrity.” Both mean: production output stays within the approved specification window.

When QA says “data-binding,” Procurement hears “warranty liability protection.” Both mean: if a defect surfaces in the field, the supplier can trace it to a specific production run and component lot.

When QA says “golden sample deviation,” Procurement hears “containment risk.” Both mean: production has drifted from the approved reference, and the scope of affected inventory is uncertain.

The bridge sentence is this: if the supplier can prove that the right checks happened on the right units at the right time, QA gains verification confidence and Procurement gains decision confidence.

What Good Supplier Proof Looks Like Before You Scale

Asking a supplier “Do you have quality control?” is not due diligence. Asking “Can you show me the QC gate records for units 4,700 through 4,850 from last Tuesday?” is. The difference is specificity.

Traceable testing route. A qualified amplifier manufacturer should demonstrate that production units move through a defined sequence of test stations — and that progression is enforced, not optional. In practice, this means barcode or QR-code scanning at each station, with the system blocking advancement if a unit hasn’t logged a pass at the previous gate.

Golden sample governance. The sample that defines “correct” must itself be rigorously validated — through finite element simulation, KLIPPEL-class measurement for acoustic and electrical parameters, then destructive and long-term power testing to confirm reliability. Once validated, the sample enters a custody protocol with documented storage conditions and triggered re-qualification events — typically after any engineering change or component substitution.

IQC/IPQC/FQC evidence. Three-stage inspection is a widely accepted framework, but the value is in the records, not the labels. Ask for accept/reject criteria at each gate, the sampling methodology, and the data trail. IQC catches component-level problems before they enter the line. IPQC catches assembly and process drift while correction is still inexpensive. FQC confirms that finished units match the golden sample within tolerance.

Reliability and corrective-action readiness. A supplier’s reliability laboratory should produce evidence, not just exist on a facility tour. For Procurement, the question is whether the supplier tests for failure modes that drive warranty exposure — thermal stress, long-term power endurance, mechanical fatigue. For QA, the question is how quickly corrective action reaches the production line when a failure is identified.

Sample-to-production consistency. Can the supplier demonstrate, with data, that mass-production units match the approved sample? This is where KLIPPEL QC measurement — comparing production output against golden sample parameters — combined with ERP/WMS-managed FIFO inventory control creates the evidence chain. Without this link, mass-production spec-drift stays invisible until it surfaces as a field failure.
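A simplified version of that comparison is sketched below. The parameter names, reference values, and tolerance bands are made up for illustration and are not drawn from any real amplifier specification or measurement system; the sketch only shows the shape of the check, in which each production measurement is compared against the golden sample value and flagged when it falls outside the agreed window.

```python
# Hypothetical golden sample reference values and tolerance bands.
GOLDEN = {"gain_db": 26.0, "output_power_w": 500.0}
TOLERANCE = {"gain_db": 0.5, "output_power_w": 15.0}


def deviations(measured: dict) -> dict:
    """Return only the parameters that fall outside the golden sample tolerance."""
    out = {}
    for name, reference in GOLDEN.items():
        delta = measured[name] - reference
        if abs(delta) > TOLERANCE[name]:
            out[name] = round(delta, 4)
    return out


in_spec = {"gain_db": 26.3, "output_power_w": 492.0}
drifted = {"gain_db": 26.8, "output_power_w": 470.0}

print(deviations(in_spec))   # empty dict: within tolerance on every parameter
print(deviations(drifted))   # both parameters flagged, with signed deviation
```

Logged per unit and per production date, the same deviations give an early view of drift toward a tolerance edge before any unit actually fails.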

Good proof should let you answer three questions without hand-waving: What was validated? How was it controlled in production? How will the supplier isolate and explain a failure if one appears? That final question matters more than many teams admit. Traceability shortens the distance between a problem and an answer. That is not a luxury in launch conditions. It is operational stability.

For additional evaluation depth, see OEM amplifier supplier due diligence and aligning engineering and procurement priorities.

The Discipline That Protects Your Brand Before Launch

The friction between Procurement and QA is not a personality conflict. It’s a structural problem — two functions using different evidence standards to answer the same question: Is this supplier ready?

The framework above replaces ambiguity with shared gates, shared vocabulary, and shared proof standards. When both teams evaluate a supplier against the same evidence checklist, disagreements surface real gaps instead of creating political standoffs.

That conference room from the opening doesn’t have to split down the middle. With a shared definition of launch readiness — documented, auditable, and specific enough to survive a vendor review — Procurement’s timeline confidence and QA’s verification depth become reinforcing strengths. When both functions use the same proof standard, the supplier is no longer judged by speed alone or by technical posture alone. The supplier is judged by whether the approved design can survive the handoff into controlled, auditable production. That is the shared logic. Practical. Defensible. Clean.

If this framework sharpened how you evaluate supplier readiness for your next private-label program, consider subscribing to the China Future Sound newsletter for continued insights on aligning engineering and procurement priorities, DFM checklists for pro audio NPI, and evidence-based supplier evaluation.

Our Editorial Process:

Our expert team uses AI tools to help organize and structure our initial drafts. Every piece is then extensively rewritten, fact-checked, and enriched with first-hand insights and experiences by expert humans on our Insights Team to ensure accuracy and clarity.

About the China Future Sound Insights Team:

The China Future Sound Insights Team is our dedicated engine for synthesizing complex topics into clear, helpful guides. While our content is thoroughly reviewed for clarity and accuracy, it is for informational purposes and should not replace professional advice.