📌 Key Takeaways

Manufacturability engineering protects your launch schedule by catching production problems early—when fixes cost hours, not months.

- Golden Samples Lie: A hand-built prototype works because engineers selected perfect parts and ideal conditions—production lines pull from the full range of “good enough,” which creates surprises at scale.

- Prevention Beats Correction 10-to-1: Spending 10 days on design-for-manufacturing reviews typically prevents 100-day recovery cycles when problems surface during production ramp.

- Use Evidence, Not Arguments: When teams disagree on whether a program is ready, whoever has documented proof—test data, supplier confirmations, gate artifacts—wins the decision.

- Frame DFM as Schedule Insurance: Sourcing teams hear “delay” when engineering asks for review time; reframe requests as “buying certainty” to protect the timeline everyone actually wants to keep.

- Artifacts Outlive Gates: The thermal margins, tolerance analyses, and test coverage maps from DFM become the baseline for every future quality decision, supplier audit, and engineering change.

The fastest schedule is the one you can actually keep.

Product managers justifying engineering investment and sourcing directors evaluating program timelines will find a shared decision framework here, preparing them for the detailed implementation guidance that follows.

~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~

It’s 9:00 AM on a Tuesday.

The EVT test results just landed, and thermal failures have appeared across three of the six prototype units. The launch window—already tight—now looks uncertain. The Sourcing Director evaluates the risk of proceeding: bypass the DFM gate and risk a total yield collapse at PVT, or enforce a pause to address the thermal delta before the tooling budget is committed.

We saved two weeks by skipping the DFM review. Now we’re about to lose two months.

This moment plays out across audio brand programs more often than anyone admits. The conflict isn’t between good people and bad decisions—it’s between two legitimate priorities that never got aligned. Sourcing optimizes for velocity. PM and Engineering optimize for controllable risk. Without a shared framework, both sides are right, and both sides lose.

Manufacturability engineering is the cross-functional discipline that ensures a product can be built repeatedly at target quality and scale, using real processes, real suppliers, and real test methods. It translates design intent into production-ready specifications, process controls, and validation gates so teams avoid late-stage rework, unstable yields, and schedule risk during ramp.

This article provides the framework: a justification model and alignment matrix that positions manufacturability engineering as what it actually is—schedule insurance, not schedule drag.

Manufacturability Engineering in Pro Audio: What It Is and Why It Becomes a Stakeholder Conflict

Manufacturability engineering validates that a design can be built consistently, at scale, within the tolerances and process capabilities of actual production—not just in an engineering lab with hand-selected components and unlimited attention.

At the highest level, this discipline covers five domains: assembly feasibility, process capability and yield risks, testability and inspection strategy, supplier readiness, and scale transitions from EVT through DVT to PVT. In pro audio programs specifically, these domains translate into concrete workstreams.

What to include in a manufacturability business case:

- Thermal and power dissipation validation under production enclosure conditions, not open-bench testing.

- Tolerance stack analysis accounting for supplier component variation across lots.

- Testability coverage confirming which parameters can be verified at scale and which cannot.

- Assembly variability assessment for manual operations that affect yield.

- Process capability mapping to actual supplier equipment and personnel.

- Change-control readiness establishing ECO/ECR workflows before they’re needed.

- Handoff documentation creating artifacts that downstream QA can actually use.

The question manufacturability engineering answers is simple: Will the production line deliver what the prototype promised?

The stakeholder conflict emerges because these activities require time—and time is the currency Sourcing is measured on. When a program plan shows “DFM Review: 2 weeks,” Sourcing sees schedule cost. When PM sees the same line item, they see the insurance policy that prevents a twelve-week rework cycle in PVT.

Neither perspective is wrong. The problem is that without a shared justification model, the debate becomes positional rather than evidence-based. Sourcing asks, “Why are we adding time?” PM struggles to quantify the disaster that didn’t happen. The result is either rushed programs that blow up late or endless negotiation that erodes cross-functional trust.

The core thesis is straightforward: manufacturability engineering is governance, not bureaucracy. It forces the hard questions early, when design changes cost hours instead of months and when supplier alignment is still achievable. The goal isn’t to slow programs down—it’s to protect the schedule that actually matters: the one you can keep.

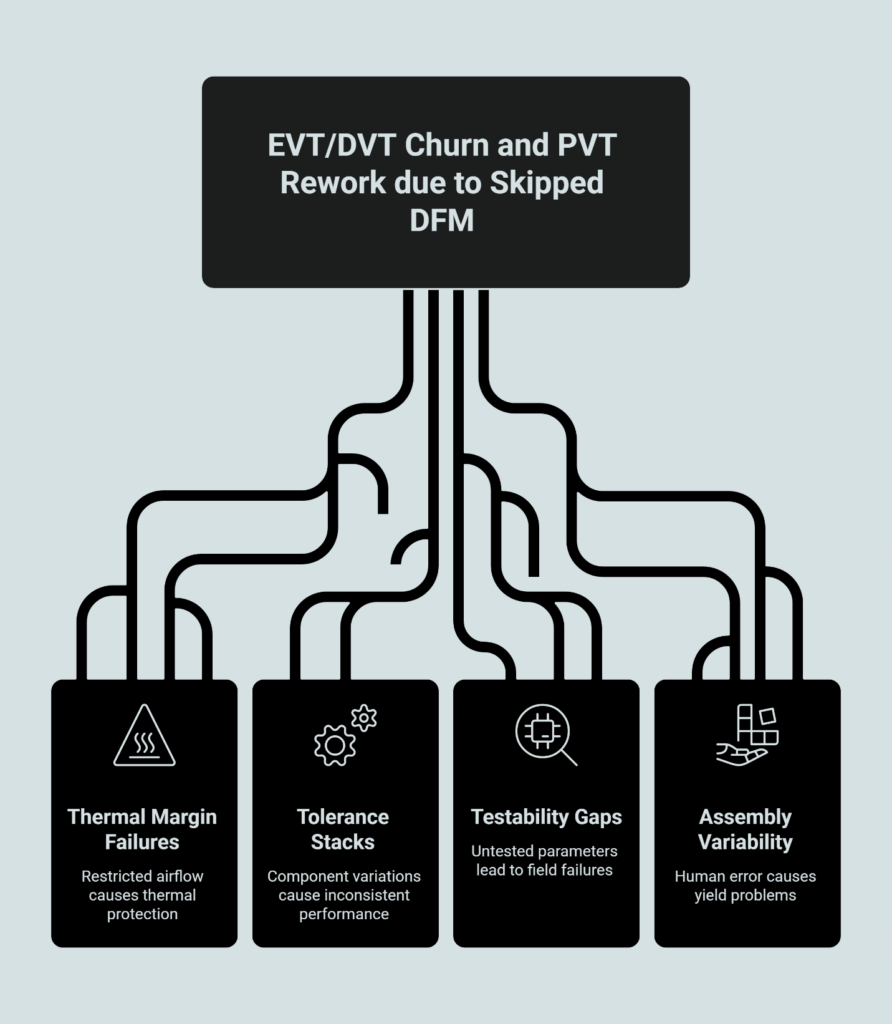

The Failure Pattern: How “Skip DFM” Turns Into EVT/DVT Churn and PVT Rework

The failure pattern follows a predictable arc, and it starts with a reasonable-sounding assumption: The prototype works. Why wouldn’t production work too?

This is the golden sample trap. A hand-built prototype with carefully selected components, assembled by senior engineers under ideal conditions, proves capability once. It does not prove consistency at scale. The gap between “this unit works” and “every unit works” is precisely what manufacturability engineering exists to close.

When teams skip or compress DFM activities, the consequences surface predictably—just at the worst possible time.

Thermal margin assumptions fail first. The design specification may indicate the amplifier can handle sustained output at an ambient temperature of 40°C. However, without manufacturability validation of the final enclosure’s airflow and thermal interface material application, production units can trigger thermal protection at ambient temperatures as low as 35°C. This 5°C shortfall typically stems from the move from open-bench “ideal” convection to the restricted airflow of a sealed production chassis, which is why in-situ thermal testing during DVT is critical.

Tolerance stacks compound. Every component in the BOM has a tolerance band. When engineers select components for prototypes, they often grab parts from the center of that band—average values, predictable behavior. Production pulls from the full distribution. When multiple tolerances stack in the same direction, assemblies that worked perfectly in the lab produce inconsistent performance in volume. The customer hears it as “unit-to-unit variation.” The warranty system logs it as returns.
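To make the stacking effect concrete, here is a minimal sketch comparing a worst-case stack (every tolerance at its limit in the same direction) with a root-sum-square (RSS) estimate, which assumes independent, centered variation. The four tolerance values are hypothetical and chosen only for illustration; they do not come from any specific amplifier design.

```python
import math


def worst_case_stack(tolerances):
    """Worst case: every part sits at its tolerance limit in the same direction."""
    return sum(abs(t) for t in tolerances)


def rss_stack(tolerances):
    """Root-sum-square: statistical estimate assuming independent, centered variation."""
    return math.sqrt(sum(t * t for t in tolerances))


# Hypothetical symmetric tolerances (in mm) for four stacked parts.
tolerances_mm = [0.10, 0.05, 0.20, 0.10]

worst = worst_case_stack(tolerances_mm)
rss = rss_stack(tolerances_mm)
print(f"worst-case stack: +/-{worst:.2f} mm")
print(f"RSS stack:        +/-{rss:.2f} mm")
```

A prototype built from center-of-band parts sits well inside both figures. Production assemblies spread across the RSS range and occasionally approach the worst case, which is the unit-to-unit variation described above.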

Testability gaps emerge under pressure. Manual inspection catches obvious defects. Automated test coverage catches systematic ones. The question manufacturability engineering answers is: Which quality-critical parameters can we actually verify at production speeds, and which are we hoping for? When that question goes unanswered, programs ship with untested assumptions. Field failures follow.

Assembly variability multiplies. Every manual operation introduces variance. Torque specifications, wire routing, thermal compound application, connector seating—each step performed by a human operator hundreds of times per shift drifts toward the edges of acceptable. Without DFM analysis identifying which operations are critical and which are forgiving, yield problems appear as a background rate of failures that erodes margin without ever triggering a single dramatic event.

The downstream effect is a familiar trap: “just one more build” to validate the fix, then “just one more” again. Each loop consumes calendar time and compresses the ramp window, increasing pressure on every function.

The time-based heuristic observed in pro-audio program postmortems aligns with the “Rule of Ten” in quality management and the exponential cost-of-change curve identified in systems engineering: the cost and time required to rectify a defect increase by roughly an order of magnitude at each subsequent phase of development, from design to prototype to production. Applied to schedule, a 10-day DFM allocation typically prevents a 100-day (roughly 14-week) recovery cycle should errors reach PVT. While the exact durations depend on the complexity of the PCBA and enclosure, the 1:10 prevention-to-correction ratio remains a reliable benchmark for schedule protection. Prevention costs less than correction. The cost-of-change curve that governs software development applies with equal force to hardware programs: earlier feedback loops produce dramatically lower total program cost.
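The arithmetic behind this heuristic can be sketched in a few lines. The function name, the phase list, and the fixed factor of 10 are illustrative assumptions; the factor is a rule of thumb, not a measured constant, and real programs will vary.

```python
def escalated_effort(base_days, phases_late, factor=10):
    """Estimate the effort to fix a defect that escapes `phases_late` phases downstream.

    `factor` is the heuristic per-phase multiplier (10 under the Rule of Ten).
    """
    return base_days * factor ** phases_late


# A 10-day DFM window versus the same issues surfacing one phase later.
dfm_days = 10
recovery_days = escalated_effort(dfm_days, phases_late=1)
print(f"prevention: {dfm_days} days, recovery: {recovery_days} days "
      f"(about {recovery_days / 7:.1f} calendar weeks)")

# A one-day design fix traced across successive phases.
for phases_late, phase in enumerate(["design", "prototype", "production"]):
    print(f"{phase}: {escalated_effort(1, phases_late)} day(s)")
```

Changing `factor` makes it easy to show Sourcing how sensitive the schedule exposure is to the assumed multiplier, rather than debating a single number.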

The failure pattern isn’t mysterious. It’s just invisible until it isn’t.

The Justification Model: A One-Page Template PMs Can Use with Sourcing

The challenge for Product Managers isn’t knowing that DFM matters—it’s justifying the time investment in language that resonates with Sourcing priorities. The following canvas provides that translation layer: a structured template that frames manufacturability engineering in terms of program risk, schedule certainty, and evidence-based gates.

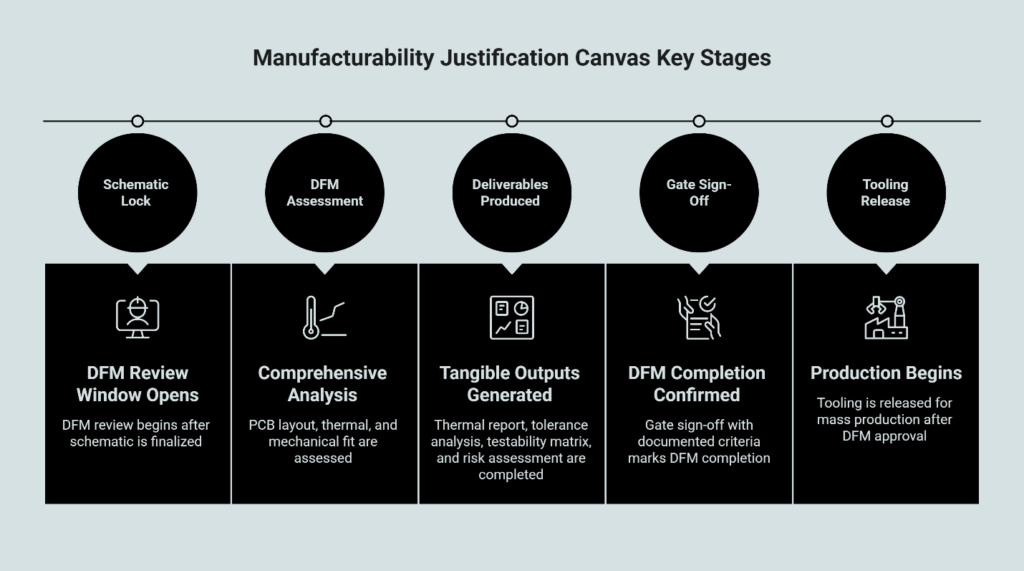

Manufacturability Justification Canvas

Decision Request: What you are asking to fund or allow. Be specific: “A 10-day DFM review window between schematic lock and tooling release” provides an actionable gate for typical Class-D amplifier sub-assemblies. This window allows for a comprehensive “Level 2” DFM assessment (covering PCB layout, thermal boundary conditions, and mechanical fit), which is essential to protecting the subsequent 90-day production ramp. For high-complexity systems with integrated wireless or high-density interconnects, this window should be adjusted based on the number of critical-to-quality parameters identified in the design phase.

Scope Boundaries: What is included and what is explicitly excluded. Define the activities, the deliverables, and the exit criteria. Scope creep kills DFM credibility faster than anything else.

DFM Deliverables: The tangible outputs the program will receive: thermal validation report with margin analysis, tolerance stack analysis for critical assemblies, testability coverage matrix showing what’s verified versus assumed, assembly risk assessment for yield-critical operations, and supplier capability confirmation for key processes.

Program Risks Being Avoided: Translate engineering concerns into program language: schedule slip from late-stage redesign, quality escapes requiring field containment, uncontrolled ECO churn after tooling, yield collapse at ramp requiring production holds, and team credibility risk with downstream stakeholders who depend on the committed timeline.

Evidence Signals: What proof will be produced to confirm DFM completion: gate sign-off with documented criteria, test readiness indicator, supplier capability confirmation letters, and first-article exit criteria defined before production.

Definition of “DFM Done”: The specific, measurable criteria that end the DFM phase: all critical parameters have verified test coverage, thermal margin meets or exceeds the defined threshold under production conditions, tolerance analysis shows Cpk at or above target for critical dimensions, and assembly work instructions have been validated by production trial.

Timeline Impact Framing: Position the request in schedule-protection terms: “We are buying schedule certainty, not adding bureaucracy. A 10-day to 14-day investment protects the 90-day ramp.”
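The Cpk criterion in the Definition of “DFM Done” can be checked with a short calculation. The sketch below uses the standard Cpk formula, the smaller distance from the mean to a spec limit divided by three standard deviations. The measurements, spec limits, and the 1.33 target are assumptions for illustration; the canvas itself only says “at or above target,” so substitute the program’s own values.

```python
import statistics


def cpk(samples, lsl, usl):
    """Process capability index from sample data and lower/upper spec limits."""
    mean = statistics.mean(samples)
    sigma = statistics.stdev(samples)  # sample standard deviation
    return min(usl - mean, mean - lsl) / (3 * sigma)


# Hypothetical pilot-run measurements of a critical dimension (mm).
measurements = [10.02, 9.98, 10.01, 9.97, 10.03, 10.00, 9.99, 10.01]
LSL, USL = 9.90, 10.10   # hypothetical spec limits
CPK_TARGET = 1.33        # assumed target; use the program's own threshold

value = cpk(measurements, LSL, USL)
status = "meets" if value >= CPK_TARGET else "is below"
print(f"Cpk = {value:.2f}, which {status} the target of {CPK_TARGET}")
```

A small pilot sample gives only a rough estimate of Cpk, so the result is best read as an evidence signal for the gate review rather than a final verdict on the process.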

Answering Sourcing Objections

When presenting this canvas, anticipate the objections and prepare evidence-based responses.

“We don’t have time for this.” The schedule you’re protecting is the one you can actually keep. Programs that skip DFM often end up in significant PVT rework cycles. You’re trading a controlled 10-day window for an uncontrolled 8-week recovery.

“The prototype works—why wouldn’t production?” The prototype proves capability. DFM proves consistency at scale. These are different questions with different evidence requirements.

“This feels like scope creep.” That’s why explicit exit criteria exist. DFM is done when specific conditions are met—not when engineering feels comfortable.

“Can’t we do this in parallel with tooling?” Some activities can run in parallel. The ones that can’t are the ones that would require tooling changes if problems surface. Sequencing here protects tooling investment.

Objection Map Reference

| Stakeholder | Common Objection | What to Validate | Practical Response |
|---|---|---|---|
| Sourcing | “This slows down supplier selection.” | Which risks can only be discovered after supplier/process selection? | Early manufacturability defines process requirements so supplier selection is less likely to reset later. |
| PM | “Scope creep will derail the roadmap.” | What evidence is truly critical vs. optional? | Use a one-page definition of done plus gates; only critical evidence blocks progression. |
| Engineering | “DFM becomes paperwork.” | Which outputs change decisions? | Focus on buildability risks, test readiness, and change control: artifacts tied to gates, not documentation volume. |

The canvas works because it translates engineering judgment into program governance. It gives Sourcing a document they can review, challenge, and ultimately approve—rather than a vague request for “more time.”

Shared Decision-Making: An Alignment Matrix for Sourcing, PM, and Engineering

Even with a strong justification model, programs need a mechanism for resolving disputes when stakeholders disagree. The following alignment matrix establishes shared criteria, evidence requirements, and operating cadence that depersonalizes conflict and focuses attention on program outcomes.

Decision Criteria

| Criteria | What It Measures | Evidence That Resolves Disputes |
|---|---|---|
| Schedule Certainty | Confidence that the committed ship date is achievable | Gate artifacts completed; no open red-flag items; supplier commitments documented |
| Production Stability | Likelihood of consistent yield at ramp volumes | Pilot run data; process capability indices; assembly trial results |
| Testability Readiness | Coverage of quality-critical parameters in production test | Test coverage matrix; correlation data between bench and production test |
| Change-Control Maturity | Ability to execute ECOs without disrupting production | ECO workflow documented; cut-in plan template ready; supplier notification process confirmed |
| Supplier Capability Signals | Confidence that suppliers can deliver to spec at volume | Process capability data; equipment qualification records; first-article approval criteria met |

Resolution Rules

When stakeholders disagree on program readiness, the dispute resolves through evidence, not position.

If Sourcing believes the program is ready to advance and Engineering disagrees, Engineering must produce the specific gate artifact that is incomplete or the specific risk that is unmitigated. If the artifact exists and meets criteria, the program advances.

If Engineering believes more time is needed and Sourcing disagrees, Engineering must quantify the risk in program terms—schedule exposure, yield risk, containment cost—and propose a bounded remediation. Sourcing evaluates the trade-off explicitly.

If PM must arbitrate, the decision defaults to the evidence. Whoever has documented proof—artifacts, data, supplier confirmation—carries the decision. Assertions without evidence do not carry weight.

Operating Cadence

Manufacturability gates work when they are binary enough to enforce and lightweight enough to run.

Single Owner: One person owns the DFM gate, typically the PM or a designated program manager. The owner convenes reviews, documents decisions, and escalates unresolved disputes.

Sign-Off Process: Gate advancement requires explicit sign-off from Sourcing, Engineering, and PM. Silence is not consent. A stakeholder who does not respond within the defined window is escalated, not assumed to agree.

Stop-the-Line Triggers: Define in advance what conditions halt program progression regardless of schedule pressure: safety-critical test failure, supplier inability to demonstrate process capability, yield below threshold in pilot run, or open ECO affecting form, fit, or function.

Rhythm: Weekly 30-minute manufacturability review covering top risks, evidence status, and blockers. Build-cycle retrospectives capturing what surprised the team and what becomes a reusable checklist item. Change-control touchpoints addressing substitutions, supplier process changes, and spec clarifications.
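The sign-off and stop-the-line rules above are simple enough to express as a small decision function. This is only a sketch of the logic as described: the `GateStatus` structure, the function name, and the three outcome labels are hypothetical and would need to match whatever tracking tool the program actually uses.

```python
from dataclasses import dataclass, field

# Gate advancement requires explicit sign-off from all three functions.
REQUIRED_SIGNOFFS = {"Sourcing", "Engineering", "PM"}


@dataclass
class GateStatus:
    signoffs: set = field(default_factory=set)         # who has explicitly signed
    stop_triggers: list = field(default_factory=list)  # open stop-the-line conditions


def gate_decision(status):
    """Return HALT, ESCALATE, or ADVANCE for the current gate status."""
    if status.stop_triggers:
        return "HALT"       # triggers override schedule pressure
    if REQUIRED_SIGNOFFS - status.signoffs:
        return "ESCALATE"   # silence is not consent
    return "ADVANCE"


status = GateStatus(
    signoffs={"Sourcing", "PM"},
    stop_triggers=["pilot-run yield below threshold"],
)
print(gate_decision(status))
```

Checking stop-the-line triggers before sign-offs reflects the rule that those conditions halt progression regardless of who has approved.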

This matrix is consistent with frameworks used in OEM amplifier supplier alignment and helps ensure that cross-functional disputes resolve through shared criteria rather than organizational politics.

How Manufacturability Engineering Sets Up Quality Gates and Acceptance Criteria

The artifacts produced during DFM don’t disappear after the gate closes. They become the foundation for every downstream quality decision—from first-article approval through end-of-line testing and ongoing supplier governance.

When DFM is executed properly, the handoff to production includes:

Defined acceptance criteria. The first-article approval process requires specific, measurable thresholds. DFM produces those thresholds: thermal margins, test limits, yield targets, cosmetic standards. Without DFM, first-article criteria become negotiated opinions rather than engineering requirements.

Test coverage that matches production capability. DFM identifies which parameters can be verified by automated test equipment and which require sampling or alternative verification. This coverage map becomes the basis for production test plans and ongoing quality monitoring.

Change-control baseline. When an ECO becomes necessary—and ECOs always become necessary—the DFM artifacts provide the baseline for impact assessment. What was the original thermal margin? What tolerance assumptions drove the design? Without this baseline, every change becomes a research project.

Supplier qualification criteria. The DFM inputs that most affect yield translate directly into supplier qualification requirements. Thermal margin assumptions become environmental test requirements. Process capability targets become supplier audit criteria. The governance story that starts with DFM extends through the entire OEM/ODM manufacturing process.
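One lightweight way to keep the test coverage map usable downstream is to store it as structured data rather than prose. The sketch below is an assumption about how such a map might look: the parameter names and the three verification labels ("automated", "sampled", "assumed") are hypothetical examples, not a prescribed schema.

```python
# Hypothetical coverage map: quality-critical parameter -> how it is verified.
coverage = {
    "output_power": "automated",
    "thd_n": "automated",
    "thermal_protection_trip": "sampled",
    "fan_noise": "assumed",
}

VERIFIED_METHODS = {"automated", "sampled"}


def coverage_gaps(matrix):
    """Parameters that ship on assumption rather than verification."""
    return sorted(p for p, method in matrix.items() if method not in VERIFIED_METHODS)


def verified_fraction(matrix):
    """Share of parameters with some form of production verification."""
    verified = sum(1 for method in matrix.values() if method in VERIFIED_METHODS)
    return verified / len(matrix)


print("gaps:", coverage_gaps(coverage))
print(f"verified: {verified_fraction(coverage):.0%}")
```

Kept under change control, a map like this gives an ECO review a direct answer to which parameters were verified at the original gate and which were only assumed.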

The continuity matters because quality problems rarely announce themselves as DFM failures. They appear as yield issues, as field returns, as customer complaints that seem unrelated to any specific decision. Tracing those problems back to root cause requires documentation that only exists if someone created it during DFM.

Organizations that treat quality management systems as paperwork exercises miss this connection. The value isn’t the certificate on the wall—it’s the discipline of documenting decisions when they’re made so they can be understood when they matter.

The cost of quality framework distinguishes between prevention costs, appraisal costs, and failure costs. Manufacturability engineering is prevention. It’s cheaper than appraisal, and it’s dramatically cheaper than failure. But the savings only materialize if the artifacts persist—if the DFM work creates a governance trail that connects design intent to production reality.

The business case for manufacturability engineering isn’t about adding process. It’s about protecting the schedule you’ve committed to, reducing the risks your stakeholders can’t see, and creating the documentation that makes downstream quality decisions possible.

For Sourcing Directors evaluating program timelines: the fastest schedule is the one you can keep. DFM buys certainty.

For Product Managers justifying engineering investment: the canvas and matrix in this article give you the tools to make that case in language that resonates across functions.

For teams building pro audio programs that demand reliable, consistent quality: the governance starts here, before tooling, before supplier commitments, before the calendar makes every problem expensive.

Review our amplifier production capabilities to understand how disciplined manufacturing processes support the programs you’re planning.

Our Editorial Process

We develop manufacturing guidance by combining internal process knowledge and documented capabilities, review of relevant industry standards and reputable references, and editorial review for clarity, accuracy, and decision usefulness. When we cite data or standards, we link to the original publisher. When specific metrics are not available, we avoid numeric claims and instead explain the decision logic, trade-offs, and risks decision-makers should evaluate.

By: China Future Sound — Engineering & Program Team.

We work with professional audio brands and distributors on OEM/ODM manufacturing programs, translating design intent into production-ready builds through structured handoffs and quality gates.