📌 Key Takeaways

Score suppliers on proof of consistent production, not how impressive the factory looks during a tour.

- Track Every Unit by Code: Barcode or QR-code systems that tie test results to each product catch quality drift before it ships.

- Watch Material Flow, Not Slide Decks: Walk through the actual inventory system during audits; spreadsheet-only tracking is a warning sign.

- Catch Problems Mid-Line: Factories that rely mainly on final inspection push too much risk downstream to your brand.

- Samples Aren’t Enough: A perfect prototype means nothing without systems that keep thousands of units matching that standard.

- Score Evidence, Not Confidence: A supplier showing logged data beats one describing “rigorous processes” every time.

The real question isn’t “Does this factory look good?” — it’s “Can they hold spec when volume and pressure hit at once?”

Procurement leads, operations directors, and QA managers evaluating audio equipment suppliers can use these points to sharpen their audit focus before working through the detailed scorecard framework that follows.

~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~

The facility looked great on camera.

Clean production floors, a confident plant manager, a golden sample that sounded perfect. Everything checked out — until the third production run shipped with a sensitivity variance across half the batch, and warranty exposure started climbing.

For Procurement Leads, Operations Directors, and QA Managers at audio brands and distribution businesses, the gap between a supplier’s presentation and their production discipline is where real risk hides. Spec-drift and batch-wide field failures don’t surface during factory tours. They show up months later, buried in field-failure data.

Most supplier scorecards score the wrong things. A scorecard built around process governance rather than surface impressions can spot warning signs before they become warranty pain — and shortlist OEM partners who actually hold spec at volume.

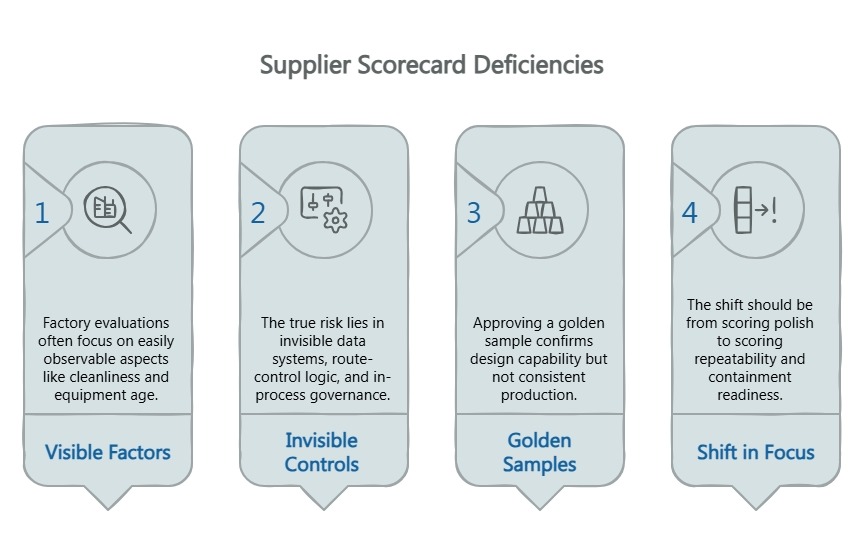

Why Most Supplier Scorecards Miss the Real Risk

A typical factory evaluation checklist over-indexes on things you can see: facility cleanliness, equipment age, whether the ISO 9001 certificate is current. These are table stakes, not differentiators.

What separates a stable amplifier manufacturing partner from a future recall problem is largely invisible during a walkthrough. It lives in the data systems, the route-control logic, and the in-process governance that either catches tolerance drift early or lets it ship. A process-based quality system, the kind described in the ISO 9001 framework, structures these invisible controls. The process approach is widely accepted across quality and supply-chain management, and it aligns with GS1 traceability guidance and NIST’s work on manufacturing supply-chain traceability. Those sources are useful context, but a certificate alone does not prove the controls are active on the line.

Golden samples deserve the same scrutiny. Approving a sample confirms the design can produce the right output. It does not confirm the factory will produce it consistently across thousands of units.

The shift is straightforward. Stop scoring polish. Start scoring repeatability and containment readiness.

How to Use a Five-Step Scorecard Before Your Next Factory Shortlist or Audit

This framework works at three stages: initial shortlist comparison, on-site audit preparation, and post-visit debrief when comparing notes across factory visits.

Before the visit, it sharpens the evidence request list. During the visit, it keeps attention on proof instead of presentation. After the visit, it makes cross-supplier comparison easier by forcing the team to compare documented controls rather than memory.

Each step follows the same structure. Score each category independently, and apply one guiding rule throughout: score evidence quality, not confidence of delivery. A supplier saying “we do FIFO” is not the same as showing the system, the controls, and the exceptions workflow.

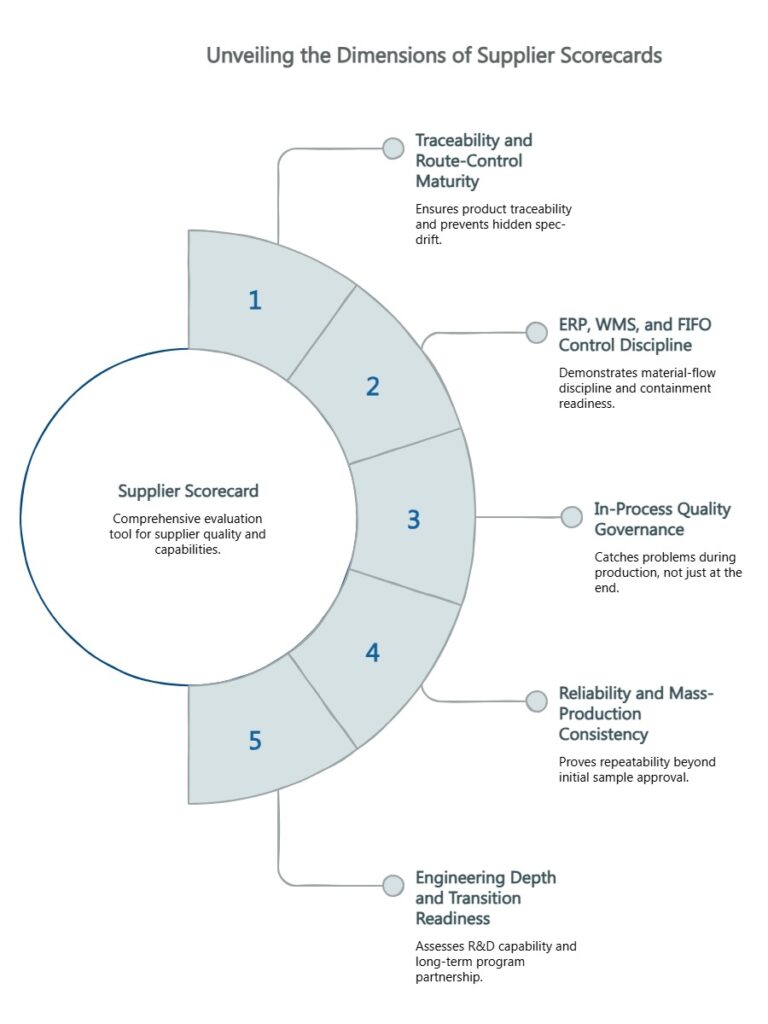

The Five-Step Scorecard

1. Traceability and Route-Control Maturity

This is the highest-signal criterion on the scorecard. A factory’s traceability infrastructure reveals whether it can prevent hidden spec-drift before shipment. If a supplier cannot tie test progression and results to specific units, hidden drift can move through the line until it becomes a field problem. Strong route control also reduces the chance of skipped gates under quota pressure.

What to evaluate: Barcode or QR-code control of the testing route, binding of test results to individual units, the ability to isolate defects without broad batch exposure, and evidence that operators cannot bypass critical gates under pressure. These controls, aligned with the GS1 Global Traceability Standard, form the backbone of a resilient quality architecture.

Evidence to request: A demonstration of barcode-routed test progression, sample traceability records, and unit-level data logging examples. A partner like China Future Sound uses barcodes and QR codes to bind test data to each unit — the kind of route control that makes recall containment surgical rather than catastrophic.

Red flags: Manual-only route tracking, no linkage between test records and serial numbers, or extensive golden-sample talk without enforcement controls.
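
To make route control concrete, the following is a minimal Python sketch of a gate-enforced test route keyed by unit serial number. It is an illustration under stated assumptions, not any supplier's actual system: the station list `ROUTE`, the `UnitRecord` class, the `RouteViolation` exception, and the serial format are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field

# Hypothetical test route; a real factory defines its own stations and order.
ROUTE = ["IQC", "IPQC", "FQC"]


class RouteViolation(Exception):
    """Raised when a unit tries to skip a gate or move on after a failure."""


@dataclass
class UnitRecord:
    serial: str  # value encoded in the unit's barcode or QR code
    results: list = field(default_factory=list)  # (station, passed, measurement)

    def next_station(self):
        """Return the next required gate, or None when the route is complete."""
        if len(self.results) < len(ROUTE):
            return ROUTE[len(self.results)]
        return None

    def log_result(self, station, passed, measurement):
        """Bind a test result to this unit while enforcing gate order."""
        # A unit that failed its last gate stays on hold until it is dispositioned.
        if self.results and not self.results[-1][1]:
            raise RouteViolation(f"{self.serial}: previous gate failed, unit is on hold")
        expected = self.next_station()
        if station != expected:
            raise RouteViolation(f"{self.serial}: expected {expected}, got {station}")
        self.results.append((station, passed, measurement))


# Usage: skipping the in-process gate is rejected instead of silently logged.
unit = UnitRecord("AMP-000123")
unit.log_result("IQC", True, 0.2)
try:
    unit.log_result("FQC", True, 0.1)
except RouteViolation as err:
    print(err)
```

During an audit, the equivalent question is whether the factory's own system refuses the out-of-order scan, or whether an operator can override it without leaving a record.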

2. ERP, WMS, and FIFO Control Discipline

Material-flow discipline is an early indicator of repeatability and containment readiness. Weak material governance usually surfaces later as inconsistency, rework, or slow root-cause response.

What to evaluate: ERP usage for material and production control, WMS coverage across raw materials, semi-finished goods, and finished products, verifiable FIFO enforcement, and visibility into lot movement and material status.

Evidence to request: An ERP or WMS walkthrough during the audit — not a PowerPoint summary. Examples of FIFO rules in practice and lot or stock-status tracking. Mature audio suppliers integrate ERP and WMS across the full production lifecycle with documented FIFO control.

Red flags: Spreadsheet-only inventory management, weak lot visibility, or FIFO processes described verbally but not verifiable in system records.
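
One way to test whether FIFO is verifiable in system records is to replay exported lot movements and look for issues that consumed a newer lot while an older one still had stock. The sketch below assumes a single material and a simplified export; the function name `fifo_violations`, the record shapes, and the lot IDs are hypothetical and would need to be mapped onto whatever the supplier's ERP or WMS actually produces.

```python
from datetime import date


def fifo_violations(lots, issues):
    """Return issue events that drew from a newer lot while an older lot had stock.

    lots:   dict of lot_id -> (received_date, quantity_received)
    issues: list of (lot_id, quantity_issued) in the order they happened
    Assumes all lots belong to the same material.
    """
    remaining = {lot_id: qty for lot_id, (_, qty) in lots.items()}
    violations = []
    for lot_id, qty in issues:
        received = lots[lot_id][0]
        # Any older lot with stock left means this issue broke FIFO order.
        older_open = [
            other
            for other, (other_received, _) in lots.items()
            if other_received < received and remaining[other] > 0
        ]
        if older_open:
            violations.append((lot_id, sorted(older_open)))
        remaining[lot_id] -= qty
    return violations


# Usage with made-up lots: the second issue pulls from LOT-B while LOT-A is still open.
lots = {
    "LOT-A": (date(2024, 1, 5), 100),
    "LOT-B": (date(2024, 1, 12), 100),
}
issues = [("LOT-A", 60), ("LOT-B", 30), ("LOT-A", 40), ("LOT-B", 70)]
print(fifo_violations(lots, issues))
```

A supplier whose records cannot support even this kind of replay is describing FIFO rather than demonstrating it.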

3. In-Process Quality Governance

A factory that leads its quality story with final inspection is telling you it catches problems at the end of the line rather than preventing them during production. That pushes too much risk downstream — ultimately to your brand.

What to evaluate: IPQC checkpoints during mass production, incoming quality controls through IQC, escalation paths for deviations, and the use of in-process evidence rather than end-of-line reassurance.

Evidence to request: IPQC check sheets, examples of stop-correct-resume logic, and a clear explanation of how deviations are contained. A robust quality system includes IQC, IPQC, FQC, and reliability-lab testing as layered gates — not a single final checkpoint.

Red flags: Final QA as the primary quality story, vague in-process criteria, or no clear containment logic. This is one of the clearest trade-offs in supplier evaluation: a supplier may look efficient on the surface while routing too much risk to the end of the line.

4. Reliability and Mass-Production Consistency

The gap between sample approval and mass-production consistency is where many OEM partnerships quietly fail. A supplier should be able to prove repeatability, not just sample approval.

What to evaluate: Consistency-control methods such as KLIPPEL QC or equivalent measurement systems, golden-sample governance tied to production benchmarks, reliability-lab testing, and destructive or long-term power testing where relevant.

Evidence to request: How golden-sample integrity is maintained across runs, reliability-test examples, and proof that approved samples are linked to mass-production controls. The best partners combine golden-sample benchmarks with active measurement systems — including tools like KLIPPEL R&D validation, AP testing, and long-term power tests — to catch drift before it ships.

Red flags: Golden-sample approval treated as the finish line, no reliability-lab capability, or no repeatability monitoring after the pilot stage.
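
Repeatability monitoring can be as simple as comparing each run's unit-level measurements against the golden-sample reference. The sketch below is one hedged way to summarize that comparison; the function name `batch_drift_report`, the choice of sensitivity in dB as the measured parameter, and the tolerance value are assumptions for illustration, not figures from any standard or supplier.

```python
from statistics import mean, stdev


def batch_drift_report(golden_db, measured_db, tolerance_db):
    """Summarize how far a production batch sits from the golden-sample value.

    golden_db:    reference measurement from the approved golden sample
    measured_db:  per-unit measurements from one production run (at least two)
    tolerance_db: allowed absolute deviation per unit (hypothetical limit)
    """
    deviations = [m - golden_db for m in measured_db]
    out_of_tolerance = [d for d in deviations if abs(d) > tolerance_db]
    return {
        "mean_offset_db": mean(deviations),   # systematic shift of the batch
        "spread_db": stdev(deviations),       # unit-to-unit variation
        "out_of_tolerance_ratio": len(out_of_tolerance) / len(deviations),
    }


# Usage with made-up numbers: one of four units exceeds a 1.5 dB limit.
report = batch_drift_report(90.0, [90.0, 91.0, 89.0, 92.0], tolerance_db=1.5)
print(report)
```

Tracking the mean offset and the spread separately helps distinguish a batch that has shifted as a whole from one where individual units are scattering, which usually point to different root causes.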

5. Engineering Depth and Transition Readiness

You are not buying a production slot. You are buying long-term execution quality and the ability to evolve a product line without starting from scratch. This is where a supplier stops looking like a commodity source and starts looking like a long-term program partner.

What to evaluate: Cross-functional R&D capability spanning acoustics, electronics, structure, and software. The ability to translate design intent into scalable production. Pilot or sample-based validation support. Readiness for supplier transition and NPI risk reduction.

Evidence to request: Engineering-team scope, sample-to-pilot workflow, and examples of design simulation, prototyping, or test validation. Partners with R&D depth, and tools like finite element simulation, rapid prototyping, and AP test infrastructure, catch design-for-manufacturing problems before they reach the production line. As one illustration of scale, an R&D team of more than 20 specialists across acoustics, electronics, structure, and software, backed by a 6-acre facility and a 300-person workforce producing 1,000 amplifiers daily, reflects the kind of engineering capacity that supports program continuity.

Red flags: Commodity posture with weak engineering ownership, no credible path from sample to stable production, or little evidence of pre-production validation.

A Simple Way to Compare Suppliers Without Overcomplicating the Decision

The scorecard works best when you keep it simple. Use a table with five rows and five columns: evaluation category, what to verify, evidence to request, red flags observed, and your notes or score. Weight traceability, ERP/WMS/FIFO, and IPQC more heavily — they are the strongest predictors of production-phase risk.
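
The weighting described above can be turned into a single comparable number per supplier. The following is a minimal sketch, assuming a 0 to 5 evidence score per category and integer weights; the specific weights, the category keys, and the `weighted_score` function are hypothetical, since the scorecard itself only says the first three categories should count for more.

```python
# Hypothetical weights: the first three categories are weighted more heavily.
WEIGHTS = {
    "traceability": 3,
    "erp_wms_fifo": 3,
    "in_process_quality": 3,
    "reliability": 2,
    "engineering": 2,
}
MAX_SCORE = 5  # 0 = verbal claims only, 5 = logged and demonstrated evidence


def weighted_score(scores):
    """Return a 0.0 to 1.0 weighted score from per-category evidence scores."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing categories: {sorted(missing)}")
    total = sum(WEIGHTS[c] * scores[c] for c in WEIGHTS)
    return total / (MAX_SCORE * sum(WEIGHTS.values()))


# Usage with made-up scores for two suppliers.
supplier_a = {"traceability": 5, "erp_wms_fifo": 4, "in_process_quality": 4,
              "reliability": 2, "engineering": 3}
supplier_b = {"traceability": 2, "erp_wms_fifo": 2, "in_process_quality": 1,
              "reliability": 5, "engineering": 5}
print(round(weighted_score(supplier_a), 2), round(weighted_score(supplier_b), 2))
```

The number is only a tie-breaker for discussion. The notes and red-flag columns still carry the detail that explains why a supplier scored the way it did.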

Focus your notes on evidence quality rather than verbal assurances. A supplier who can show barcode-routed test data tied to serial numbers is giving harder proof than one who describes “rigorous quality processes” without system-level records. For a more granular framework, the Factory Evaluation for Amplifier Manufacturing: A Shareable Thirty-Point Checklist scores suppliers across people, process, equipment, quality, and compliance. For audit-specific preparation, The OEM Audit Checklist: 50 Questions to Ask Before Signing extends this framework into a structured pre-contract review.

Use this scorecard on your next two supplier reviews and compare the notes side by side. The gaps usually become obvious once the conversation moves from claims to evidence. The question is not “Does this factory look good?” — it is “Can this partner hold spec when pressure, volume, and change hit at the same time?”

For more sourcing and manufacturing guidance, explore the China Future Sound blog and subscribe for updates on quality frameworks and supplier evaluation tools. You can also visit the About Us and Contact pages for broader background on the company and direct outreach.

Our expert team uses AI tools to help organize and structure our initial drafts. Every piece is then extensively rewritten, fact-checked, and enriched with first-hand insights and experiences by expert humans on our Insights Team to ensure accuracy and clarity.

About the China Future Sound Insights Team

The China Future Sound Insights Team is our dedicated engine for synthesizing complex topics into clear, helpful guides. While our content is thoroughly reviewed for clarity and accuracy, it is for informational purposes and should not replace professional advice.